Most teams do not need a more autonomous AI agent yet.

They need an operating model.

That sounds dry, but it is usually the difference between a clever demo and a system the business can trust. A demo only has to work once, in a controlled path, with friendly inputs. A production agent has to work on Tuesday afternoon when the queue is messy, the data is incomplete, a customer is annoyed, and someone needs to know exactly what happened.

That is where many AI agent projects get stuck. The team keeps debating models, orchestration frameworks, and tool choices. Those choices matter, but they are not the first bottleneck.

The first bottleneck is operational clarity.

Who owns the agent? What can it access? What can it change? When does it stop? Who reviews edge cases? How do you know whether it helped?

If those questions are vague, the agent is not ready for production.

Why an operating model matters now

The enterprise AI market is moving in a clear direction. Major platforms are no longer talking only about chatbots or isolated assistants. They are talking about agent deployment, governance, registries, observability, evaluations, access controls, and workflow-specific automation.

That shift matters for founders and operators.

It means the hard part is no longer proving that an AI system can do a task once. The hard part is proving that it can do the right work repeatedly, inside a real business process, with the right controls.

An AI agent operating model answers that question before engineering starts writing too much code.

It defines how the agent fits into the business. Not in theory. In the actual workflow.

Start with ownership

Every production agent needs a named owner.

Not a vague department. Not "the AI team." A person or role.

The owner does not need to write the prompts or build the integration. Their job is to own the business outcome and the operating rules. They decide what the agent is allowed to do, what it must escalate, and how success gets measured.

Without an owner, the agent becomes everyone's toy and nobody's responsibility.

That is fine in a sandbox. It is dangerous in production.

For example, imagine an agent that triages inbound sales requests. Marketing wants fast response times. Sales wants better qualification. Operations wants clean CRM data. Leadership wants more booked calls.

All of those goals are related, but someone has to decide the priority. If the agent has to choose between speed and qualification quality, what wins? If a request looks promising but lacks required information, should it create a task, ask a follow-up question, or route it to a human?

Those are ownership decisions.

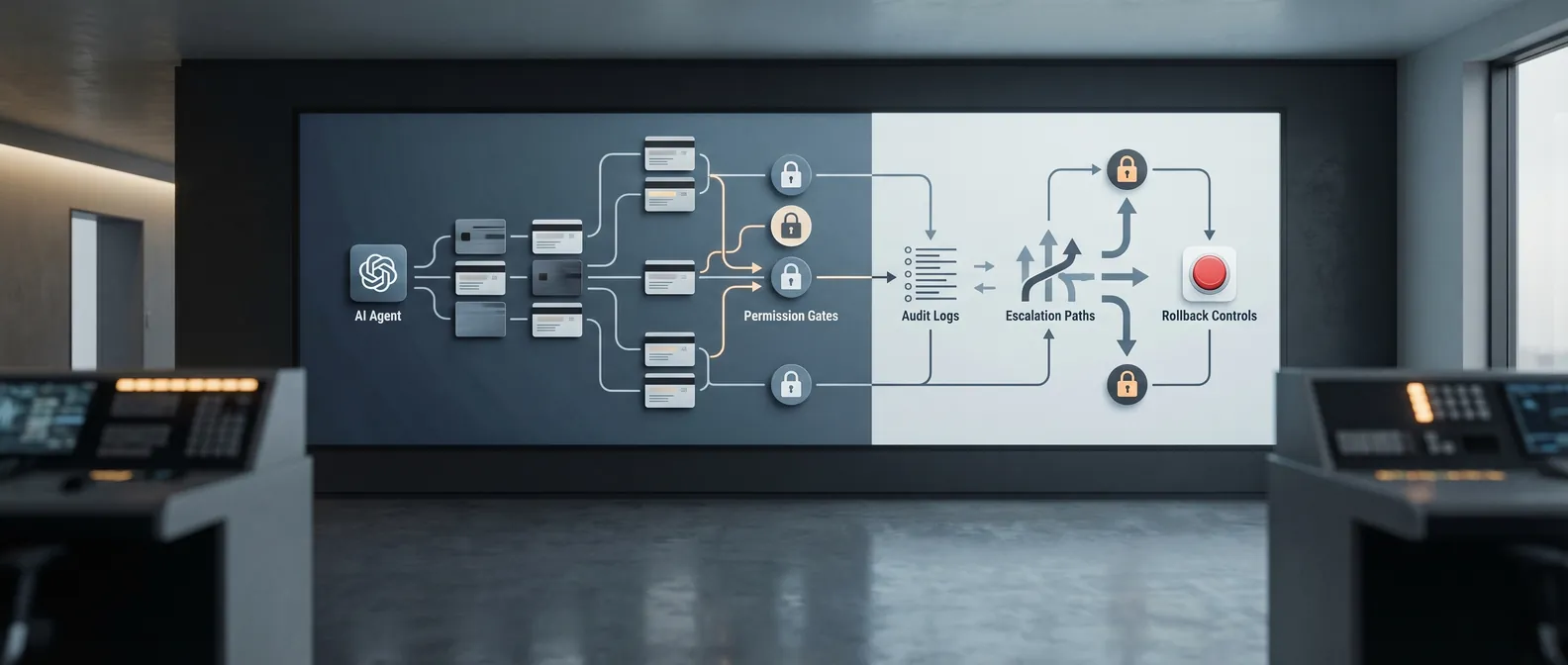

Define the agent's authority

The next question is simple: what can the agent do without asking?

This is where teams often get sloppy. They say the agent can "use the CRM" or "handle support tickets." That is too broad.

Write the permissions in plain language.

Can it read customer records? Can it update fields? Can it create a new ticket? Can it send a customer-facing message? Can it refund, cancel, approve, delete, invite, schedule, or commit the company to anything?

Different verbs carry different risk.

Reading is not the same as writing. Drafting is not the same as sending. Recommending is not the same as approving. Creating a task is not the same as changing an account.

A good operating model separates those actions clearly.

For an early production version, I usually prefer a narrow set of trusted actions:

- Read the records needed for the workflow

- Draft the next step

- Create internal tasks

- Tag or classify work

- Summarize context for a human

- Escalate anything outside the approved path

That may sound conservative. It is also how you get a system live without betting the company on a black box.

Put stop rules in writing

An agent without stop rules will eventually improvise in places where it should pause.

Stop rules are the conditions that force escalation. They are boring to write and incredibly useful when something odd happens.

Common stop rules include:

- Required data is missing

- The request involves legal, billing, security, medical, or compliance risk

- The customer asks for something outside policy

- The agent sees conflicting information across systems

- Confidence is low

- The action would change money, access, commitments, or permissions

- The workflow does not match an approved category

Do not bury these rules in code only. Put them in the operating model so business owners can review them.

This is where trust starts to form. People do not trust agents because the model is impressive. They trust agents when the boundaries are understandable.

Decide what gets logged

Agent observability sounds like an engineering topic. For production use, it is a business topic.

The business needs to know:

- What did the agent receive?

- What data did it look at?

- What tools did it call?

- What did it decide?

- What did it change?

- Who reviewed or approved the action?

- What happened after that?

If you cannot reconstruct the path, you cannot debug the workflow. You also cannot defend the process when a customer, auditor, partner, or executive asks why something happened.

Logging does not need to be fancy at first. Start with a readable timeline for each agent run. Capture inputs, tool calls, outputs, escalation reasons, human decisions, and final outcomes.

Then review the logs weekly.

That review is where you find the real product roadmap. You will see where the agent hesitates, where humans keep correcting it, where the input data is weak, and where the workflow itself is broken.

Evaluate against the workflow, not the demo

Most AI agent evaluations are too polite.

They test clean examples. They avoid weird edge cases. They measure whether the answer looks good instead of whether the work got done safely.

Production evaluation should be harsher.

Build a test set from real workflow examples. Include normal cases, edge cases, incomplete inputs, bad data, policy-sensitive requests, and situations where the right answer is "escalate."

Then score the agent on practical questions:

- Did it classify the work correctly?

- Did it use the right source data?

- Did it avoid restricted actions?

- Did it escalate when it should?

- Did it produce a useful handoff for the human?

- Did it reduce cycle time without increasing cleanup work?

Accuracy matters, but it is not the whole story. A system that gets the label right and creates three messy downstream tasks is not working.

Measure the workflow outcome.

Plan for rollback before launch

Rollback is easy to ignore until the first incident.

Before the agent goes live, decide what happens when it makes a bad change, sends a bad draft, misroutes a request, or produces output that a team should not use.

At minimum, define:

- Who can pause the agent

- Which actions can be reversed

- Where approval history lives

- How affected records are found

- What gets communicated internally

- What gets changed before the agent is turned back on

This does not need to be dramatic. It just needs to exist.

The worst version is a system that quietly changes business records and leaves the team guessing afterward.

The one-page operating model

You do not need a giant governance document to start.

For most first production agents, a one-page operating model is enough. It should answer:

- What workflow does this agent own?

- Who is the business owner?

- What systems can it read?

- What systems can it write to?

- What actions can it take without approval?

- What actions always require human review?

- What are the stop rules?

- What gets logged?

- How will the agent be evaluated?

- How can it be paused or rolled back?

If the team cannot fill that out, do not build yet.

If the team can fill it out, engineering gets much easier. The architecture has a shape. The prompts have boundaries. The tests have purpose. The humans know where they fit.

That is the point.

An AI agent is not just a model with tools. It is a worker inside a business process.

Give it an operating model before you give it more autonomy.

If you are trying to move an AI agent from demo to production and want a second set of eyes on the operating model, book a discovery call: https://calendly.com/martintechlabs/discovery

FAQ

What is an AI agent operating model?

An AI agent operating model defines how an agent works inside a business process. It covers ownership, permissions, stop rules, logging, human review, evaluation, and rollback before the agent is trusted with production work.

Why do AI agents need governance before production?

AI agents can read data, call tools, create records, and trigger downstream work. Governance makes those actions visible and bounded so the business knows what the agent can do, when it must stop, and who is responsible for the outcome.

What should an AI agent be allowed to do in its first production version?

Most first production agents should start with narrow authority. Reading records, drafting outputs, classifying work, creating internal tasks, and escalating exceptions are usually safer than letting the agent make irreversible changes on its own.

How do you evaluate an AI agent before launch?

Evaluate the agent against real workflow examples, including messy inputs and edge cases. Score whether it used the right data, avoided restricted actions, escalated correctly, and improved the business workflow without creating extra cleanup.