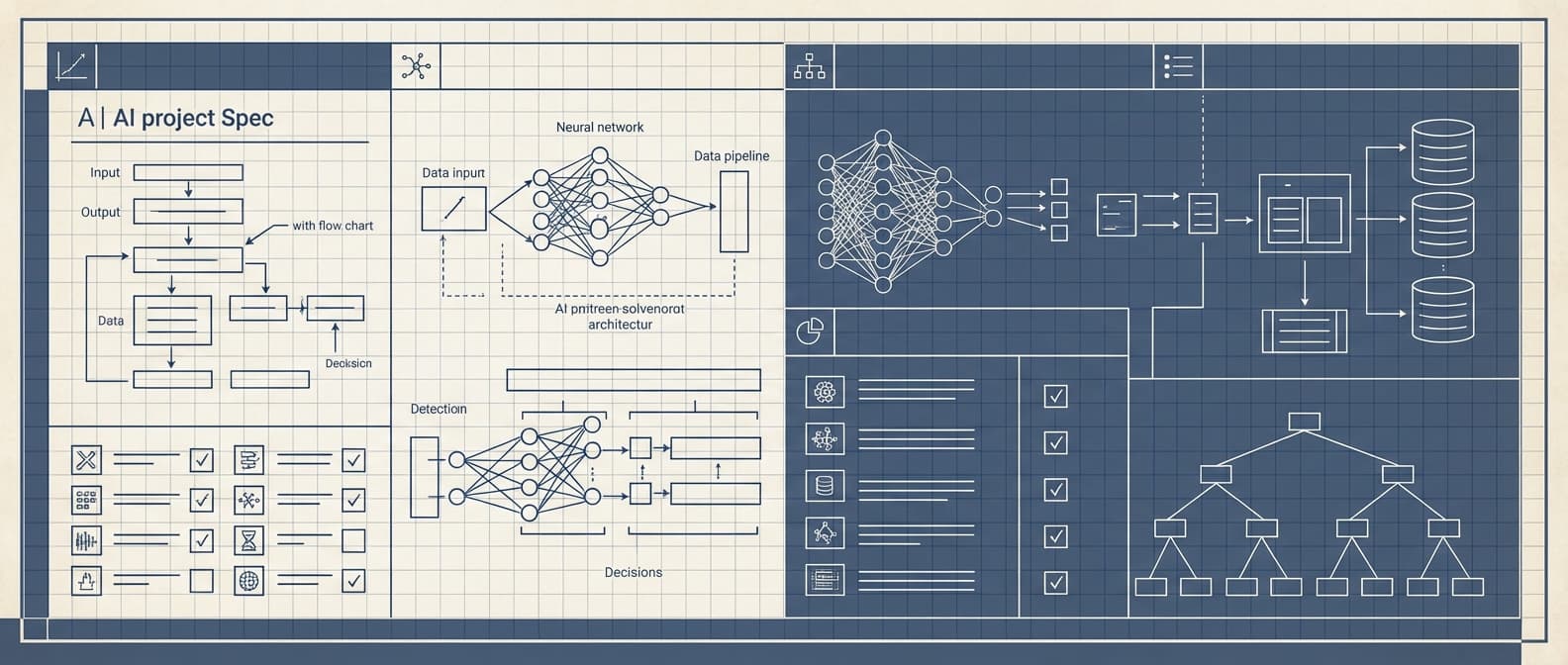

What to put in a technical spec before an AI build starts

Most AI projects start with a conversation and end with a discovery phase. What happens in between — the documentation of what you're actually building and why — often gets underweighted in the rush to start development.

A good technical spec isn't a formality. It's the document that prevents misaligned expectations, uncovers hidden requirements, and forces the clarity that heads off expensive changes later. Writing it carefully is one of the highest-ROI things you can do before anyone writes a line of production code.

Here's what we've found needs to be in a technical spec for an AI system, and what happens when each section is missing.

1. The problem statement

Not the solution — the problem.

This seems obvious, but it's surprisingly common to see AI project specs that jump straight to "we want to build a model that..." without a clear articulation of what problem that model is solving, why it matters, and what a world without it looks like.

A good problem statement includes: what manual process or decision is being automated, who does it today, how long it takes, what errors look like and how often they occur, and what the measurable cost of the current state is. If you can't describe the cost of the problem in concrete terms, you'll have a hard time evaluating whether the solution worked.
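To make "the measurable cost of the current state" concrete, here is a minimal sketch of the arithmetic. The `BaselineCost` class and every number in it are hypothetical placeholders, not figures from a real project; the point is only that each term should be something you can actually measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BaselineCost:
    """Hypothetical description of the manual process being automated."""
    items_per_month: int      # how many items are handled by hand
    minutes_per_item: float   # average handling time per item
    hourly_rate: float        # loaded cost of the person doing the work
    error_rate: float         # fraction of items handled incorrectly
    cost_per_error: float     # average cost to detect and fix one error

    def labor_cost(self) -> float:
        return self.items_per_month * self.minutes_per_item / 60 * self.hourly_rate

    def error_cost(self) -> float:
        return self.items_per_month * self.error_rate * self.cost_per_error

    def monthly_cost(self) -> float:
        return self.labor_cost() + self.error_cost()


# Placeholder numbers for illustration only.
baseline = BaselineCost(
    items_per_month=2000,
    minutes_per_item=6,
    hourly_rate=40.0,
    error_rate=0.03,
    cost_per_error=150.0,
)
print(f"labor:  {baseline.labor_cost():.2f}")
print(f"errors: {baseline.error_cost():.2f}")
print(f"total:  {baseline.monthly_cost():.2f}")
```

With these made-up inputs the error term is larger than the labor term, which is the kind of finding that changes what the system should optimize for.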

When this section is missing, projects drift. The team ships something technically functional that doesn't actually reduce the cost that motivated the project.

2. Input specification

What does the system receive? Be specific.

For every input type:

- Format (PDF, JSON, plain text, image, structured API response)

- Source (where does it come from and how does it arrive)

- Volume (how many per day, per hour, in burst)

- Variability (what's the range of what you'll see — different layouts, languages, quality levels)

- Edge cases (what inputs arrive that differ from the typical case, and how often)

The most common failure in this section is specifying the clean, typical case and not the distribution. A contract review system specified for "standard NDAs in PDF format" will encounter handwritten amendments, scanned documents with poor OCR, contracts in other languages, and variations you didn't anticipate. Specifying the edge cases upfront forces a design conversation about how they'll be handled, rather than discovering them in production.
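One way to specify the distribution rather than the typical case is to hand-label a sample of real inputs and tally it. The sketch below assumes a small, entirely hypothetical sample (`SAMPLE`) and a helper named `share_by`; a real sample would be larger and drawn from actual traffic.

```python
from collections import Counter

# Hypothetical hand-labeled sample of incoming documents: (format, language, scanned).
FIELDS = ("format", "language", "scanned")
SAMPLE = [
    ("pdf", "en", False),
    ("pdf", "en", False),
    ("pdf", "en", False),
    ("pdf", "en", False),
    ("pdf", "en", False),
    ("pdf", "en", False),
    ("pdf", "en", True),
    ("pdf", "de", False),
    ("pdf", "en", True),
    ("docx", "en", False),
]


def share_by(sample, field):
    """Return the fraction of the sample that falls under each value of `field`."""
    index = FIELDS.index(field)
    counts = Counter(row[index] for row in sample)
    return {value: count / len(sample) for value, count in counts.items()}


for field in FIELDS:
    print(field, share_by(SAMPLE, field))
```

Even a rough tally like this turns "we sometimes get scans" into a number the design conversation can work with.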

3. Output specification

What should the system produce, and in what form?

This section should be precise enough that a developer could write a test for it. Not "the system should categorize documents" — "the system should return a JSON object with a category field from this defined set, a confidence score between 0 and 1, and an extracted_fields object with these specific fields present or null if not found."

Also specify: what happens when the system is not confident enough to return a result? What does the output look like when the input is outside the expected distribution? Underspecified output handling produces systems that return silent failures or incorrect results presented with false confidence.
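As an illustration of "precise enough that a developer could write a test for it", here is a minimal sketch of a check for the output described above. The category set and the required field names are assumptions made up for this example; the real ones belong in the spec.

```python
# Hypothetical values; the spec should define the real set and fields.
ALLOWED_CATEGORIES = {"nda", "msa", "invoice", "other"}
REQUIRED_FIELDS = ("party_name", "effective_date")


def validate_output(result: dict) -> list[str]:
    """Return a list of violations; an empty list means the output conforms."""
    problems = []

    category = result.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        problems.append("category is not in the defined set")

    confidence = result.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0 <= confidence <= 1:
        problems.append("confidence must be a number between 0 and 1")

    extracted = result.get("extracted_fields")
    if not isinstance(extracted, dict):
        problems.append("extracted_fields must be an object")
    else:
        for name in REQUIRED_FIELDS:
            # The key must exist; its value may be None (null) when nothing was found.
            if name not in extracted:
                problems.append(f"extracted_fields.{name} must be present (null if not found)")

    return problems


example = {
    "category": "nda",
    "confidence": 0.91,
    "extracted_fields": {"party_name": "Acme", "effective_date": None},
}
print(validate_output(example))
```

A check like this only covers the shape of the output. What the system returns for low-confidence or out-of-distribution inputs still has to be decided and written down separately.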

4. Accuracy requirements and error tolerance

What does "good enough" mean for this system?

Define the accuracy threshold the system needs to meet to be deployed, and what types of errors are acceptable versus not. For a document classification system, a false positive (classifying a document as type A when it's actually type B) and a false negative (failing to classify a document as type A when it really is type A) may have very different costs. The spec should reflect that.
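A small worked example shows why a single accuracy number can hide this. The per-error costs and error counts below are hypothetical; the sketch only illustrates that two systems with the same number of errors can differ widely in cost.

```python
# Hypothetical per-error costs in the same currency unit; real values come from the business.
COST_PER_FALSE_POSITIVE = 5.0     # e.g. a reviewer spends a minute dismissing a wrong label
COST_PER_FALSE_NEGATIVE = 200.0   # e.g. a missed document causes downstream rework


def total_error_cost(false_positives: int, false_negatives: int) -> float:
    """Weight each error type by what it costs rather than counting errors equally."""
    return (false_positives * COST_PER_FALSE_POSITIVE
            + false_negatives * COST_PER_FALSE_NEGATIVE)


# Two hypothetical candidates, each making 40 errors on the same evaluation set.
candidate_a = total_error_cost(false_positives=30, false_negatives=10)
candidate_b = total_error_cost(false_positives=10, false_negatives=30)
print(candidate_a, candidate_b)
```

Under these assumed costs, the candidate with more false negatives is far more expensive despite identical accuracy.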

Also specify the human review path. For any AI system used in a real workflow, there's a confidence threshold below which the system should route to human review rather than return an automated output. Define that threshold explicitly, along with what the human review workflow looks like.
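The routing rule itself can be very small once the threshold is agreed. This is a sketch under stated assumptions: `REVIEW_THRESHOLD`, `route`, and `review_share` are names invented for the example, and 0.85 is a placeholder, not a recommended value.

```python
REVIEW_THRESHOLD = 0.85  # hypothetical; the spec should state the agreed value


def route(result: dict) -> str:
    """Send low-confidence results to a person instead of returning them automatically."""
    if result["confidence"] < REVIEW_THRESHOLD:
        return "human_review"
    return "automated"


def review_share(results: list[dict]) -> float:
    """Fraction of results that would land in the human review queue."""
    if not results:
        return 0.0
    routed = sum(1 for r in results if route(r) == "human_review")
    return routed / len(results)


batch = [{"confidence": c} for c in (0.99, 0.92, 0.84, 0.60)]
print([route(r) for r in batch], review_share(batch))
```

Estimating the review share on a realistic sample is worth doing before committing to a threshold, because it determines how many people the review workflow needs.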

When this section is vague, teams end up building toward an undefined target and shipping systems that can't be measured against any agreed standard.

5. Integration points

What does the system connect to, and what are the constraints?

List every system the AI needs to read from or write to: databases, APIs, file storage, authentication systems, notification services. For each:

- What is the authentication method, and how are access credentials obtained and managed?

- What are the rate limits or quotas?

- What is the data format and schema?

- What are the latency requirements for reads and writes?

This section tends to reveal the hidden complexity in an AI project. A system that "extracts data from invoices and updates the ERP" sounds simple until you're looking at the ERP's authentication model, its rate limits, its data validation rules, and the fact that its API documentation hasn't been updated in four years.
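One lightweight way to keep this list honest is to record each integration as structured data and let the gaps show. The `IntegrationPoint` class, the `open_questions` helper, and the ERP details below are all hypothetical, chosen only to mirror the questions listed above.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class IntegrationPoint:
    """One external system the AI reads from or writes to. None means 'not yet known'."""
    name: str
    auth_method: Optional[str] = None
    rate_limit: Optional[str] = None
    data_format: Optional[str] = None
    latency_requirement: Optional[str] = None


def open_questions(point: IntegrationPoint) -> list[str]:
    """List the attributes that still have no answer for this integration."""
    return [f.name for f in fields(point) if getattr(point, f.name) is None]


# Hypothetical entry: two answers known, two still open.
erp = IntegrationPoint(
    name="ERP",
    auth_method="OAuth 2.0 client credentials",
    data_format="JSON over REST",
)
print(erp.name, "open questions:", open_questions(erp))
```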

6. Performance requirements

How fast does it need to be, and what load does it need to handle?

Specify: expected request volume, acceptable latency at p50 and p99, maximum acceptable error rate, and what happens during peak load. Also: is this a synchronous system (user waits for a response) or asynchronous (result delivered later)?
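For readers less familiar with percentile targets, here is a minimal sketch of checking measured latencies against a p50 and p99 budget. It uses the nearest-rank definition of a percentile, which is one of several common definitions; the sample latencies and the budget values are hypothetical.

```python
import math


def percentile(samples: list[float], p: int) -> float:
    """Nearest-rank percentile: the smallest value with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical measurements and budgets, in milliseconds.
latencies_ms = [120, 135, 150, 160, 180, 210, 240, 300, 450, 2400]
budget_ms = {50: 500, 99: 2000}

for p, limit in budget_ms.items():
    observed = percentile(latencies_ms, p)
    status = "ok" if observed <= limit else "over budget"
    print(f"p{p}: {observed} ms (limit {limit} ms) {status}")
```

In this made-up sample the median looks healthy while a single slow request pushes p99 over its budget, which is why the spec should name both.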

LLM inference is slow relative to most software operations. A system designed for synchronous user-facing responses has fundamentally different architectural requirements than one designed for batch processing of overnight queues. This distinction needs to be made explicit.

7. Data handling and compliance requirements

How must data be handled, and what regulations apply?

At minimum: is any personal data involved, what are the retention requirements, who can access what, and are there regulatory constraints (HIPAA, GDPR, financial data regulations, attorney-client privilege) that affect where data can be processed or stored?

This section is the one most often skipped in early-stage specs and most often responsible for expensive late-stage rework when a legal or compliance review surfaces requirements that conflict with architectural decisions already made.

8. Success criteria and measurement plan

How will you know it worked?

Define the metrics that will be measured once the system is in production, how they'll be captured, and what thresholds constitute success versus failure. This section is what enables a meaningful evaluation after launch and what gives the team a clear target during development.

Without this section, "did it work?" becomes a judgment call made retroactively, often without the data to answer it clearly.
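Writing the criteria down as data makes the later evaluation mechanical. The sketch below is an assumption-laden example: the `Criterion` class, the metric names, the thresholds, and the observed values are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    metric: str
    threshold: float
    higher_is_better: bool

    def passed(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


# Hypothetical success criteria agreed in the spec.
CRITERIA = [
    Criterion("classification_accuracy", 0.95, higher_is_better=True),
    Criterion("p99_latency_seconds", 5.0, higher_is_better=False),
    Criterion("human_review_share", 0.20, higher_is_better=False),
]


def evaluate(observed: dict[str, float]) -> dict[str, bool]:
    """Compare production measurements against each agreed threshold."""
    return {c.metric: c.passed(observed[c.metric]) for c in CRITERIA}


# Hypothetical production measurements.
results = evaluate({
    "classification_accuracy": 0.962,
    "p99_latency_seconds": 6.3,
    "human_review_share": 0.14,
})
print(results)
```

A missing metric raises a `KeyError` here on purpose: if a criterion was agreed but never captured, that gap should be visible rather than silently skipped.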

A note on spec completeness

Not every section will be fully known at the start of a project. That's fine. A spec that honestly documents what's known and what's still uncertain is more useful than one that fills in uncertain sections with assumptions that haven't been validated.

The goal of a good spec isn't to have all the answers. It's to make explicit what's known, what's unknown, and what decisions need to be made before development can proceed with confidence.

If you'd like a template or want to talk through what a spec for your specific use case should cover, book a discovery call.