How to Evaluate AI Workflow Automation Before You Buy a Platform

Most teams buy AI tooling in the wrong order.

They see a strong demo. Leadership gets interested. Procurement starts asking about platform options. Then the team tries to reverse-engineer a real business use case after the buying conversation is already underway.

That is backwards.

If you want AI workflow automation that survives contact with real operations, evaluate the workflow before you commit to the platform. The model matters. The tooling matters. But neither is the first question.

The first question is simpler: can this workflow be automated in a way that your team can actually trust?

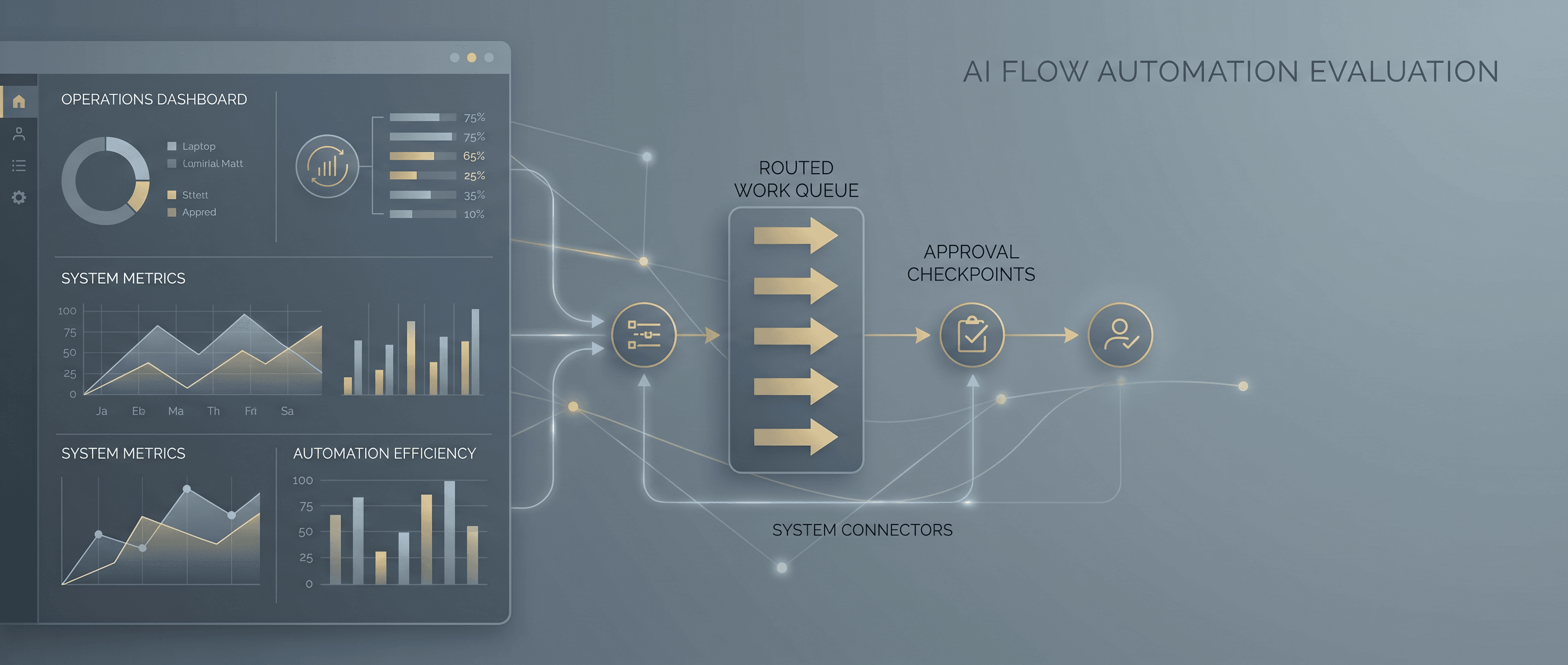

Two recent market signals point the same way. OpenAI's April 8, 2026 enterprise note framed demand around agents working across company systems with the right permissions and controls. NIST's March 2026 announcement that CAISI had signed an MOU with GSA focused on strengthening AI evaluation science in federal procurement. The pattern is clear. Buyers are getting less interested in raw capability and more interested in whether a workflow is bounded, testable, and governable.

That is a healthier way to buy.

Start with one workflow, not a platform category

"We need an AI platform" is not a useful starting point.

"We need help triaging inbound support tickets that currently sit for six hours before a human picks them up" is better.

"We need to extract fields from incoming vendor invoices, route exceptions, and push approved records into the finance system" is better.

Good evaluation starts with one painful workflow that already has:

- a visible queue

- a repeatable input

- a clear output

- a known handoff point into a system of record

If you cannot describe what comes in, what a good output looks like, and where the work should land next, the workflow is still too fuzzy. That is not a tooling problem. It is a scoping problem.
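One way to make that scoping test concrete is to write the workflow down as a small record and see which parts are still blank. The sketch below is only an illustration: the `WorkflowScope` class, its field names, and the `unscoped_parts` helper are hypothetical, and the example values paraphrase the invoice workflow mentioned earlier.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkflowScope:
    """Hypothetical one-page description of a single workflow."""
    queue: str               # where the work visibly piles up
    input_description: str   # what comes in, in repeatable form
    output_description: str  # what a good output looks like
    system_of_record: str    # where the result has to land


def unscoped_parts(scope: WorkflowScope) -> list[str]:
    """Return the names of fields that are still empty or whitespace."""
    return [f.name for f in fields(scope) if not getattr(scope, f.name).strip()]


invoice_scope = WorkflowScope(
    queue="incoming vendor invoices",
    input_description="invoice documents from vendors",
    output_description="",  # not yet agreed, so the workflow is still fuzzy
    system_of_record="finance system",
)
missing = unscoped_parts(invoice_scope)
```

If `missing` is not empty, the next step is a scoping conversation, not a vendor comparison.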

Build a small evaluation queue from real work

Do not test on the cleanest ten examples.

Pull a small queue of real tasks from the live workflow. Include the messy stuff. Include incomplete forms, weird formatting, contradictory records, and the edge cases your operators complain about.

I usually like a queue that includes:

- routine examples that should pass cleanly

- borderline examples that require judgment

- obvious exceptions that should escalate to a human

That mix tells you much more than a happy-path test.

The point is to learn whether the workflow can hold up when the inputs stop behaving.
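A minimal sketch of assembling that kind of mixed queue, assuming each real task has already been labeled by an operator as `routine`, `borderline`, or `exception`. The function name, the `category` key, and the counts are illustrative assumptions, not a prescribed method.

```python
import random
from collections import defaultdict


def build_eval_queue(tasks, per_category, seed=0):
    """Sample a fixed number of real tasks from each category.

    tasks: list of dicts, each with a "category" key (assumed labeling).
    per_category: dict mapping category name to how many tasks to include.
    """
    rng = random.Random(seed)  # fixed seed so the queue can be rebuilt
    by_category = defaultdict(list)
    for task in tasks:
        by_category[task["category"]].append(task)

    queue = []
    for category, count in per_category.items():
        pool = by_category.get(category, [])
        if len(pool) < count:
            # Fail loudly instead of quietly testing on an easier mix.
            raise ValueError(f"only {len(pool)} '{category}' tasks, need {count}")
        queue.extend(rng.sample(pool, count))

    rng.shuffle(queue)  # avoid running all the easy cases first
    return queue
```

Raising an error when a category is short is a deliberate choice here: a queue with no real exceptions in it is a happy-path test with extra steps.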

Define pass-fail thresholds before you run the test

This is where a surprising number of teams get sloppy.

They start with a rough goal like "save time" or "improve triage." Then the test begins, and everyone quietly reinterprets success based on how much effort has already been spent.

Set the thresholds first.

For a workflow evaluation, I would want answers to questions like:

- What accuracy is good enough on the fields or classifications that matter?

- What percentage of cases should escalate instead of auto-completing?

- How much reviewer time is acceptable per completed task?

- What kinds of mistakes are tolerable, and which ones are disqualifying?

- What has to happen in the downstream system for the run to count as successful?

Write those down before anyone starts tuning prompts or comparing vendors. Otherwise you are grading on vibes, and vibes are expensive.
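One way to keep the thresholds honest is to record them as a frozen object before the test and check every run against it mechanically. This is a sketch under assumptions: the `Thresholds` fields, the `check_run` helper, and every number below are placeholders to be replaced with values your team agrees on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: thresholds cannot be edited mid-test
class Thresholds:
    min_field_accuracy: float
    max_escalation_rate: float
    max_review_seconds_per_task: float
    disqualifying_errors: frozenset


def check_run(measured, thresholds):
    """Return the list of thresholds a run failed; empty means it passed."""
    failures = []
    if measured["field_accuracy"] < thresholds.min_field_accuracy:
        failures.append("field_accuracy")
    if measured["escalation_rate"] > thresholds.max_escalation_rate:
        failures.append("escalation_rate")
    if measured["review_seconds_per_task"] > thresholds.max_review_seconds_per_task:
        failures.append("review_seconds_per_task")
    # Any disqualifying error fails the run, however good the averages look.
    hit = sorted(set(measured["error_types"]) & thresholds.disqualifying_errors)
    failures.extend(f"disqualifying:{name}" for name in hit)
    return failures


# Placeholder values, agreed before any prompt tuning or vendor comparison.
LOCKED = Thresholds(
    min_field_accuracy=0.95,
    max_escalation_rate=0.30,
    max_review_seconds_per_task=90.0,
    disqualifying_errors=frozenset({"wrong_payee"}),
)
```

Keeping disqualifying errors separate from the averages reflects the distinction above between tolerable mistakes and the ones that end the evaluation.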

Test the handoff, not just the answer

A model can generate a decent summary. Fine. Can the workflow create a draft in the CRM with the right owner? Can it attach the source context? Can it route low-confidence cases into a queue that a human will actually check?

That is the real evaluation.

For most business workflows, the valuable questions are:

- Did the automation use the right source data?

- Did it produce output in a format the next system can use?

- Did it stop when the case crossed a risk boundary?

- Did the human reviewer have enough context to act fast?

- Did the workflow reduce cycle time without creating cleanup work later?

If the answer quality looks good but the handoff is clumsy, you do not have workflow automation yet. You have a smart helper sitting beside the workflow.
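The handoff questions can be checked per case as well. The sketch below assumes each automated run produces a handoff record as a plain dictionary; the field names, the `human_review` destination, and the 0.8 confidence cutoff are all hypothetical and would need to match your own systems.

```python
# Fields the next system is assumed to need on every handoff record.
REQUIRED_FIELDS = ("record_id", "owner", "source_refs", "confidence", "destination")


def handoff_problems(handoff, review_threshold=0.8):
    """Return a list of reasons this handoff would not be usable downstream."""
    problems = [
        f"missing:{name}"
        for name in REQUIRED_FIELDS
        if handoff.get(name) in (None, "", [])
    ]
    if problems:
        return problems  # no point checking routing on an incomplete record

    # Low-confidence cases must land in a queue a human actually checks.
    if handoff["confidence"] < review_threshold and handoff["destination"] != "human_review":
        problems.append("low-confidence case was not routed to human review")
    return problems
```

A case only counts as handed off when this list comes back empty, regardless of how good the generated text looks.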

Keep human review in the test from day one

A lot of teams want to test autonomy immediately. I would not.

Early evaluations should make human review visible, not optional. You are trying to learn where the system is dependable, where it hesitates, and where it should stop. That means reviewers need to see the proposed output, the source evidence, and the reason a case was escalated.

If the workflow still needs a human to rewrite every output, the automation is weak. If the human mostly approves, corrects edge cases, and handles exceptions, now you are learning something useful. That review load is part of the economics, and it should be measured early.
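To measure that review load, it can help to have reviewers log one outcome per case and then look at the split. A minimal sketch, assuming three hypothetical outcome labels (`approved`, `corrected`, `rewritten`) and a `seconds` field for time spent:

```python
from collections import Counter

OUTCOMES = ("approved", "corrected", "rewritten")  # assumed labels


def review_load(reviews):
    """Summarize how reviewers spent their effort across a test run."""
    if not reviews:
        raise ValueError("no reviews recorded")
    counts = Counter(r["outcome"] for r in reviews)
    total = len(reviews)
    return {
        # Share of cases per outcome; a high "rewritten" share is the warning sign.
        "shares": {name: counts[name] / total for name in OUTCOMES},
        "mean_review_seconds": sum(r["seconds"] for r in reviews) / total,
    }
```

A run dominated by `rewritten` matches the weak case described above; a run dominated by `approved` with a few corrections is the one worth costing out.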

Score the workflow like an operations change

Do not score it like a demo.

The question is not "did the model say something plausible?" The question is whether the workflow performs better than the current manual path without introducing more operational risk.

Useful evaluation metrics usually include:

- completion rate

- exception rate

- reviewer time per case

- downstream rework rate

- cycle-time reduction

- traceability of each run

Those numbers tell you whether the workflow is getting safer and cheaper, or just looking smarter.
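As a rough sketch of how those metrics could be computed from per-run records: the record fields (`status`, `review_seconds`, `reworked`, `cycle_minutes`, `trace_id`) and the manual baseline are assumptions about what your test harness logs, not a standard schema.

```python
def score_runs(runs, baseline_cycle_minutes):
    """Compute operations-style metrics from a list of run records."""
    if not runs:
        raise ValueError("no runs to score")
    if baseline_cycle_minutes <= 0:
        raise ValueError("baseline cycle time must be positive")

    total = len(runs)
    completed = [r for r in runs if r["status"] == "completed"]
    escalated = [r for r in runs if r["status"] == "escalated"]
    mean_cycle = sum(r["cycle_minutes"] for r in runs) / total

    return {
        "completion_rate": len(completed) / total,
        "exception_rate": len(escalated) / total,
        "reviewer_seconds_per_case": sum(r["review_seconds"] for r in runs) / total,
        # Rework is measured only on cases the automation claimed to finish.
        "rework_rate": (
            sum(1 for r in completed if r["reworked"]) / len(completed)
            if completed else 0.0
        ),
        # Fraction of the manual cycle time saved (negative means slower).
        "cycle_time_reduction": 1 - mean_cycle / baseline_cycle_minutes,
        # Share of runs that left a trace someone can audit later.
        "traceable_share": sum(1 for r in runs if r["trace_id"]) / total,
    }
```

Comparing these numbers across test rounds, against the same manual baseline, is what shows whether the workflow is actually improving.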

This is why current enterprise AI messaging has shifted toward permissions, observability, and controls. Once a workflow can touch real systems, the quality bar changes.

Delay platform commitment until the workflow earns it

This is the part I would argue for most strongly.

Do not anchor on a vendor because the demo was compelling. Run the workflow evaluation first. Learn what the job actually needs:

- maybe the bottleneck is document quality, not model quality

- maybe the workflow needs a queue and rubric more than an agent framework

- maybe the team needs tighter permissions and rollback before more autonomy

- maybe a lightweight integration is enough, and a full platform is overkill

Once you know those things, the buying process gets easier. You can evaluate vendors against the actual job instead of a vague promise. You ask better questions. You spot bad fits faster. You avoid paying for surface area you will not use.

A simple evaluation checklist

Before you buy an AI workflow automation platform, make sure you can answer these questions clearly:

- What exact workflow are we testing?

- What queue of real work will we use?

- What counts as a successful output?

- What must escalate to a human?

- What system of record receives the result?

- What pass-fail thresholds are locked before the test starts?

- How will we measure reviewer effort and cleanup work?

- What evidence will we keep for each run?

- Who owns the workflow if it moves forward?

If those answers are still fuzzy, slow down. The workflow needs more design before it needs more software.

That is not hesitation. It is how good implementations avoid expensive rework.

If you are evaluating AI workflow automation and want help pressure-testing the workflow before you commit to a platform, book a discovery call.

Sources

- OpenAI, "The next phase of enterprise AI" (April 8, 2026): https://openai.com/index/next-phase-of-enterprise-ai/

- NIST, "CAISI signs MOU with GSA to boost AI evaluation science in federal procurement through USAi" (March 2026): https://www.nist.gov/news-events/news/2026/03/caisi-signs-mou-gsa-boost-ai-evaluation-science-federal-procurement-through