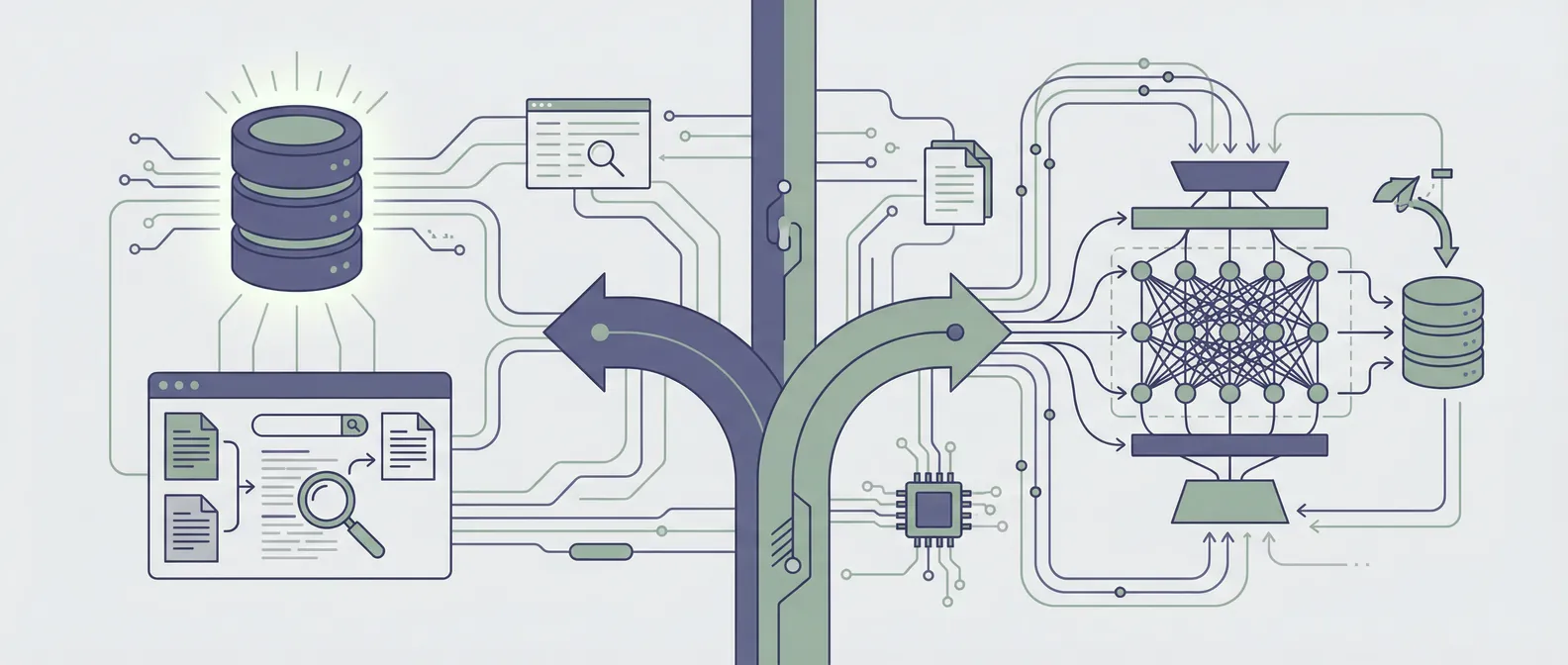

RAG vs. fine-tuning: how we decide which one to use

This is one of the most common questions we get early in a project. The client has a use case in mind, they've done some reading, and they want to know: should we do retrieval-augmented generation or fine-tuning?

The honest answer is that they're solving different problems, and choosing between them is usually less about capability and more about the specific nature of what you're trying to build.

Here's the framework we use.

What each approach actually does

Retrieval-augmented generation (RAG) connects a language model to an external knowledge source at query time. When a user asks a question, the system retrieves the most relevant documents or chunks from a database and includes them in the model's context window. The model then generates an answer based on both its pretrained knowledge and the retrieved content.

RAG doesn't change the model. It changes what information the model has access to at the moment it needs to answer.
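Here's a minimal sketch of the retrieval step to make those mechanics concrete. It assumes a tiny in-memory list of text chunks and uses bag-of-words cosine similarity as a stand-in for the embedding model and vector database a production system would use. The function names are illustrative, and the model call is left out: the model stays the same, and only the prompt it receives changes.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (stand-in for a vector search)."""
    q = Counter(tokenize(query))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Place the retrieved chunks in the context window ahead of the question."""
    context = "\n\n".join(retrieve(query, chunks, k))
    return f"Answer using the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


chunks = [
    "Refunds are issued within 14 days of a return being received.",
    "Our office is closed on public holidays.",
    "Standard shipping takes 3 to 5 business days.",
]
# The refund chunk is the only one sharing a word ("refunds") with the question.
print(build_prompt("How long do refunds take?", chunks, k=1))
```

Swapping the toy similarity function for real embeddings changes retrieval quality. It doesn't change the structure: retrieve, then assemble the prompt.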

Fine-tuning adjusts the model's weights by training on a dataset of examples specific to your domain or task. After fine-tuning, the model has internalized patterns from that data, so it responds differently from the base model without needing that information supplied at query time.

Fine-tuning changes the model itself.
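In practice, most of the fine-tuning work is preparing that dataset of examples. Below is a minimal sketch, assuming the chat-style JSONL layout (one `{"messages": [...]}` object per line) that several hosted fine-tuning services accept. Check your provider's documentation for its exact schema. The system prompt and ticket examples are hypothetical. The training job that consumes this file is the step that actually updates the weights.

```python
import json

SYSTEM_PROMPT = "You are a support assistant. Reply with an Issue line and a Priority line."

# Hypothetical training pairs: each maps an input to the exact response we want learned.
EXAMPLES = [
    ("Customer can't reset their password after three attempts.",
     "Issue: password reset failure\nPriority: medium"),
    ("Checkout page returns an error for every card payment.",
     "Issue: payment failure at checkout\nPriority: high"),
]


def to_chat_record(user_text: str, assistant_text: str) -> dict:
    """Wrap one example in a chat-style record: system, user, then the target reply."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_text},
            {"role": "assistant", "content": assistant_text},
        ]
    }


def to_jsonl(examples: list[tuple[str, str]]) -> str:
    """Serialize examples as JSONL: one JSON object per line (newlines inside strings are escaped)."""
    return "\n".join(json.dumps(to_chat_record(u, a)) for u, a in examples) + "\n"


dataset = to_jsonl(EXAMPLES)
print(dataset)
```

A real dataset would need hundreds or thousands of such pairs, and every one of them encodes the behavior you want the model to learn.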

When RAG is the right choice

RAG is the right answer in the majority of enterprise use cases we encounter. It's worth reaching for first unless you have a specific reason not to.

Your knowledge base is large, changing, or proprietary. If you need the model to answer questions about your internal documentation, your product catalog, your support history, or any corpus that gets updated regularly, RAG is the right fit. Fine-tuning on a static snapshot of that data would be out of date the moment anything changed. RAG keeps the knowledge current by retrieving from a database you control.

You need the model to cite or reference specific sources. A RAG system knows which documents it retrieved for each answer, so it can return them alongside the answer. If your use case requires traceability, such as "here's the specific policy document this came from" or "this is the exact support ticket we're referencing," RAG makes that straightforward. A fine-tuned model can't point to the training example behind an answer, because that knowledge is spread across its weights.
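Here's a minimal sketch of how that traceability can work, assuming each chunk is stored with a source ID. The `Chunk` type, the IDs, and the simple word-overlap ranking are all hypothetical stand-ins. Tagging each chunk in the context lets the prompt ask for inline citations, and returning the source list lets the application show its work even if the model's citations are imperfect.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    source_id: str  # hypothetical: a document path, policy ID, or ticket number
    text: str


def words(text: str) -> set[str]:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve_with_sources(query: str, chunks: list[Chunk], k: int = 2) -> tuple[str, list[str]]:
    """Rank chunks by word overlap, tag each with its source ID, and return the prompt plus the sources used."""
    q = words(query)
    scored = [(len(q & words(c.text)), c) for c in chunks]
    relevant = [pair for pair in scored if pair[0] > 0]  # drop chunks with no overlap at all
    relevant.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep original order
    top = [c for _, c in relevant[:k]]
    context = "\n".join(f"[{c.source_id}] {c.text}" for c in top)
    prompt = (
        "Answer using only the context below and cite the bracketed source ID for each claim.\n\n"
        f"{context}\n\nQuestion: {query}"
    )
    return prompt, [c.source_id for c in top]


chunks = [
    Chunk("policy/refunds.md", "Refunds are issued within 14 days of receiving the return."),
    Chunk("policy/shipping.md", "Standard shipping takes 3 to 5 business days."),
    Chunk("ticket-8812", "Customer asked whether refunds cover shipping costs."),
]
prompt, sources = retrieve_with_sources("Do refunds cover shipping?", chunks)
print(sources)  # ['ticket-8812', 'policy/refunds.md']
```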

You're working with sensitive or proprietary data. If you fine-tune through a hosted API, your training data leaves your environment. With RAG, the knowledge base stays in a store you control. Only the chunks retrieved for a given query are sent to the model, and if the model is self-hosted, nothing leaves at all.

You want to be able to update or audit the knowledge base. With RAG, you can add, remove, or correct information in the retrieval store, and the very next query reflects the change. You can also inspect the store directly to see exactly what the system can draw on. With a fine-tuned model, you'd need to update the training data and retrain.
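The sketch below shows why updates are cheap. It uses a hypothetical in-memory `KnowledgeStore` keyed by document ID; a real system would use a vector database or search index with equivalent upsert and delete operations. Correcting a document changes what the next query retrieves, and nothing about the model is retrained.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class KnowledgeStore:
    """Hypothetical in-memory stand-in for a retrieval store, keyed by document ID."""

    def __init__(self) -> None:
        self._docs: dict[str, str] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        """Add a document, or overwrite it if the ID already exists."""
        self._docs[doc_id] = text

    def delete(self, doc_id: str) -> None:
        """Remove a document; a missing ID is ignored."""
        self._docs.pop(doc_id, None)

    def snapshot(self) -> dict[str, str]:
        """Copy of the current contents, e.g. for auditing what the system can retrieve."""
        return dict(self._docs)

    def search(self, query: str, k: int = 1) -> list[tuple[str, str]]:
        """Return up to k (doc_id, text) pairs that share at least one word with the query."""
        q = words(query)
        scored = [(len(q & words(t)), d, t) for d, t in self._docs.items()]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [(d, t) for _, d, t in scored[:k]]


store = KnowledgeStore()
store.upsert("returns-policy", "Returns are accepted within 30 days of purchase.")
print(store.search("How many days do I have for returns?"))

# Correct the policy: the very next query retrieves the new text, with no retraining.
store.upsert("returns-policy", "Returns are accepted within 45 days of purchase.")
print(store.search("How many days do I have for returns?"))

store.delete("returns-policy")
print(store.search("How many days do I have for returns?"))  # []
```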

When fine-tuning makes sense

Fine-tuning solves a different class of problems. It's not about what the model knows. It's about how it behaves.

You need to change the model's style, format, or voice. If you want responses that consistently follow a specific structure, use your company's terminology, or adopt a particular tone, fine-tuning can bake those patterns in. Prompting alone (a detailed system prompt, a few examples) can get you partway there, but fine-tuning makes the behavior more reliable.

You're doing a well-defined classification or extraction task. If your use case is classifying documents into categories, extracting structured fields from unstructured text, or generating responses that follow a tight schema, a fine-tuned model often outperforms a general-purpose model driven by prompts and retrieval, and it is significantly cheaper to run per query.
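For narrow tasks like these, "outperforms" is something you can measure on a held-out set. Here is a minimal sketch of one such check, which you could run over outputs from both a fine-tuned model and a prompted baseline. It assumes a hypothetical invoice-extraction schema and that the model is asked to return a single JSON object. It measures format adherence only; field-level accuracy against labeled answers would be the next check.

```python
import json
from typing import Optional

# Hypothetical extraction schema: field name -> accepted JSON value types.
SCHEMA = {"invoice_number": (str,), "total": (int, float), "currency": (str,)}


def validate_extraction(raw_output: str) -> Optional[dict]:
    """Return the parsed object if it is JSON with exactly the schema's fields and types, else None."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or set(data) != set(SCHEMA):
        return None
    for field, types in SCHEMA.items():
        value = data[field]
        # bool is a subclass of int in Python, so reject booleans explicitly (no field here should be one).
        if isinstance(value, bool) or not isinstance(value, types):
            return None
    return data


def schema_adherence(outputs: list[str]) -> float:
    """Fraction of model outputs that pass validation (0.0 for an empty list)."""
    if not outputs:
        return 0.0
    return sum(validate_extraction(o) is not None for o in outputs) / len(outputs)


outputs = [
    '{"invoice_number": "INV-001", "total": 120.5, "currency": "EUR"}',
    "The total is 120.5 EUR.",  # prose instead of JSON
    '{"invoice_number": "INV-002", "total": "n/a", "currency": "USD"}',  # wrong type
    '{"invoice_number": "INV-003", "total": 99, "currency": "USD"}',
]
print(schema_adherence(outputs))  # 0.5
```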

Latency or cost at scale is a hard constraint. Fine-tuning on a smaller, faster model can be much more efficient for high-volume, narrow tasks than running a large model with retrieval. If you're processing millions of documents and the task is well-defined, a fine-tuned smaller model is often the right call.
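A back-of-the-envelope comparison shows why volume changes the math. Every number below is hypothetical, including the token counts and per-million-token prices. Prices vary widely by provider and change often, so substitute your own. The estimate covers per-query token costs only and leaves out the one-time training cost, any premium a provider charges for serving fine-tuned models, and the cost of running retrieval infrastructure.

```python
def monthly_cost(queries: int, input_tokens: int, output_tokens: int,
                 usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Token cost per month, given per-query token counts and per-million-token prices."""
    per_query_units = input_tokens * usd_per_m_input + output_tokens * usd_per_m_output
    return queries * per_query_units / 1_000_000


QUERIES = 1_000_000  # hypothetical: one million documents processed per month

# Large general model with retrieval: the document plus ~3,000 tokens of retrieved context.
rag = monthly_cost(QUERIES, input_tokens=500 + 3_000, output_tokens=200,
                   usd_per_m_input=3.00, usd_per_m_output=15.00)

# Small fine-tuned model: just the document in, a short label or record out.
fine_tuned = monthly_cost(QUERIES, input_tokens=500, output_tokens=50,
                          usd_per_m_input=0.30, usd_per_m_output=1.20)

print(f"RAG + large model: ${rag:,.0f}/month")
print(f"Fine-tuned small model: ${fine_tuned:,.0f}/month")
```

With these made-up inputs, the estimate works out to about $13,500 versus $210 a month. Most of the gap comes from two multipliers: the retrieved context inflates every prompt, and the larger model charges more per token. Shorter prompts on a smaller model also tend to mean lower latency, although this sketch doesn't model latency.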

Base model behavior doesn't match what you need. For specialized domains where general model behavior is genuinely insufficient and can't be corrected with prompting or retrieval, fine-tuning can help. This is rarer than people expect but does come up in highly technical or specialized domains.

The most common mistake

The most common mistake we see is reaching for fine-tuning because it sounds more sophisticated or permanent, when RAG would solve the actual problem faster and with better long-term maintainability.

Fine-tuning a model on a knowledge base, product docs, or FAQ content is almost always the wrong call. The data changes, the training is expensive, and you end up with a static snapshot baked into model weights that can't be easily updated or audited.

We've seen clients spend three to four weeks preparing fine-tuning datasets and running training jobs, only to discover that a well-built RAG system would have done the same job better and in half the time.

The cases where you actually want both

Some production systems use both. Fine-tuning handles style, format, or task-specific behavior; RAG handles dynamic knowledge retrieval. This combination shows up in support automation (fine-tuned for tone and response structure, RAG for product-specific content), legal document review (fine-tuned for legal reasoning patterns, RAG for jurisdiction-specific references), and similar use cases.

Running both adds complexity: two components to build, evaluate, and maintain. It's the right answer when you have clear requirements for each, and not before.

A practical starting point

When we're evaluating a new use case, we ask a few questions early:

- Is the core problem "the model doesn't know X" or "the model doesn't behave like Y"?

- Will the underlying knowledge change over the next six months?

- Do you need the system to cite sources or show its work?

- What are the latency and cost requirements at production scale?

If the answers point toward "knowledge problem, changing data, needs attribution," that's RAG. If they point toward "behavior problem, fixed patterns, volume constraints," fine-tuning is worth evaluating.
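Those questions can also be written as a rough checklist. Below is one way to encode them, as a simplification under stated assumptions: each question is reduced to yes/no, the field names are illustrative, and the output is a starting point for evaluation, not a verdict. As noted earlier, the "both" branch should only win when each component has clear requirements of its own.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    knowledge_gap: bool       # "the model doesn't know X"
    behavior_gap: bool        # "the model doesn't behave like Y"
    knowledge_changes: bool   # underlying knowledge will change within ~six months
    needs_citations: bool     # answers must cite sources or show their work
    volume_constrained: bool  # tight latency/cost limits on a narrow, high-volume task


def recommend(uc: UseCase) -> str:
    """Map the screening answers to a starting point: 'rag', 'fine-tune', or 'both'."""
    rag_signals = uc.knowledge_gap or uc.knowledge_changes or uc.needs_citations
    ft_signals = uc.behavior_gap or uc.volume_constrained
    if rag_signals and ft_signals:
        return "both"  # only if each component has clear requirements of its own
    if ft_signals:
        return "fine-tune"  # worth evaluating, not a foregone conclusion
    return "rag"  # also the default when nothing points strongly either way


support_bot = UseCase(knowledge_gap=True, behavior_gap=False, knowledge_changes=True,
                      needs_citations=True, volume_constrained=False)
print(recommend(support_bot))  # rag
```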

If you're in the middle of this decision and want a second opinion on which approach fits your use case, book a discovery call. We've built both in production and are happy to share what we've seen work.