What a Production AI Sprint Actually Looks Like

Companies hire us for AI Sprints when they've already decided to build something. They have a problem, a rough idea of a solution, and a deadline driven by a board expectation or a competitive threat. What they don't have is the internal team to build it.

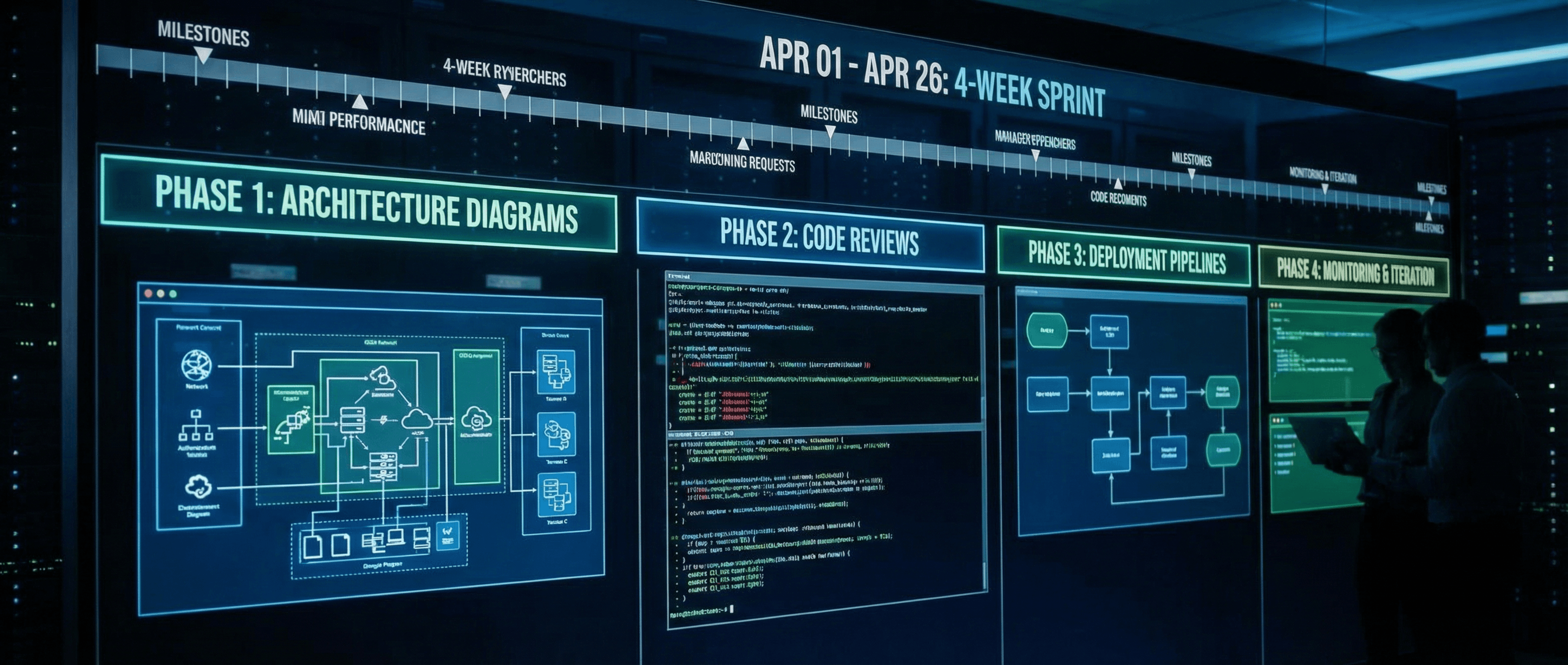

Here's what we do in four weeks.

Week 1: Scope and architecture

Most AI projects fail because the scope was wrong from the start. Week 1 is about getting scope right.

We spend the first few days understanding the problem in depth. Not the stated problem, but the real one. What data does the system need to work on? What happens when the AI gets it wrong? What does the edge case look like at 3am when no one is on call? Who owns the system after we leave?

These questions sound obvious. In practice, most teams haven't worked through the answers before they start building. I'd say that's true for more than half the clients we work with, even experienced ones. Doing this up front is where most of the value in the Sprint is created.

By the end of week 1, we have a written scope document, a data assessment (what's available, what's missing, what needs to be cleaned or transformed), and a technical architecture. Everyone on both sides has agreed on what we're building and why.

We also agree on what we're not building. Scope creep is the most predictable failure mode in any fixed-timeline engagement. Writing down the boundaries early, and being specific about them, is what makes the rest of the Sprint move.

Weeks 2-3: Build

This is the sprint. We build fast, but the goal isn't a demo. It's a production-grade system from day one.

That means the observability stack goes in from the start, not at the end. It means input validation handles edge cases, not just the happy path. It means tests verify behavior rather than chase a coverage number. We don't plan to go back and add these things later, because "later" in a fixed-timeline engagement is a fiction.

We deploy to a staging environment at the end of week 2 for client review. This is a real deployment, not a screen share. Clients interact with it, break it, and tell us what's wrong. That feedback loop, actual use against actual data, catches more issues in two days than a week of internal review. Every time. It's one of the things about this format I'm most convinced by.

We incorporate that feedback and spend the first part of week 3 fixing what came up. By the end of week 3, the system runs correctly against real inputs, the edge cases are documented, and the performance characteristics are understood.

A few things we've learned about building AI systems under time pressure:

Don't use models for things that don't need models. If a rule handles 90% of cases cleanly, use a rule. Save the model for the 10% where judgment is actually required. Mixed systems are harder to debug but often more reliable and faster than pure AI approaches.
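As a minimal sketch of the rule-first idea, the snippet below routes inputs through deterministic rules and only calls a model when no rule matches. The rules, the labels, and the `model_fallback` callable are hypothetical placeholders, not taken from any client system.

```python
import re
from typing import Callable, Optional, Tuple

# Hypothetical rule: invoice IDs in a fixed format need no model to recognize.
INVOICE_PATTERN = re.compile(r"\bINV-\d{6}\b")


def classify_with_rules(text: str) -> Optional[str]:
    """Return a label when a deterministic rule applies, otherwise None."""
    if INVOICE_PATTERN.search(text):
        return "invoice"
    if "unsubscribe" in text.lower():
        return "opt_out"
    return None


def classify(text: str, model_fallback: Callable[[str], str]) -> Tuple[str, str]:
    """Try rules first; call the model only when no rule matches.

    Returns (label, source) so operators can see which path produced the answer.
    """
    label = classify_with_rules(text)
    if label is not None:
        return label, "rule"
    return model_fallback(text), "model"
```

Returning the source alongside the label is one way to offset the debugging cost of a mixed system: every answer records whether a rule or the model produced it.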

The data pipeline is usually the hardest part. Building the AI logic is straightforward by comparison. The real work is getting the right data into the right shape, from the production systems the client actually uses, in a way that handles schema changes and missing values. That's where Sprints slow down. We front-load data work in week 1 so it doesn't eat week 3.
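One way to make schema changes and missing values visible is to validate each record against an explicit schema before it reaches the AI logic. The sketch below assumes a small hypothetical schema (`customer_id`, `created_at`, `notes`); real field names and types would come from the week 1 data assessment.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical schema: field name -> (expected type, required?)
EXPECTED_SCHEMA = {
    "customer_id": (str, True),
    "created_at": (str, True),
    "notes": (str, False),
}


def validate_record(record: Dict[str, Any]) -> Tuple[Dict[str, Any], List[str]]:
    """Return a cleaned record plus a list of problems found in the input."""
    cleaned: Dict[str, Any] = {}
    problems: List[str] = []
    for field, (expected_type, required) in EXPECTED_SCHEMA.items():
        value = record.get(field)
        if value is None:
            if required:
                problems.append(f"missing required field: {field}")
            else:
                cleaned[field] = None  # optional field: make the gap explicit
            continue
        if not isinstance(value, expected_type):
            problems.append(f"wrong type for {field}: {type(value).__name__}")
            continue
        cleaned[field] = value
    # Fields outside the schema often mean the upstream schema has changed.
    for field in sorted(set(record) - set(EXPECTED_SCHEMA)):
        problems.append(f"unexpected field: {field}")
    return cleaned, problems
```

Reporting problems as data instead of raising on the first one lets the pipeline decide per record whether to skip, quarantine, or alert.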

Failure handling isn't optional. Every AI system will produce wrong answers. The question is what happens when it does. A system with good failure handling degrades gracefully and surfaces errors in a way operators can act on. A system without it fails silently until a user escalates.
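A rough sketch of what degrading gracefully can look like in code: wrap the model call so that exceptions and low-confidence answers become explicit, logged statuses instead of silent bad output. The `predict` callable, its `(answer, confidence)` return shape, and the 0.7 threshold are all assumptions for illustration.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

logger = logging.getLogger("ai_pipeline")


@dataclass
class Result:
    value: Optional[str]
    status: str  # "ok", "low_confidence", or "error"
    detail: str = ""


def safe_predict(
    predict: Callable[[str], Tuple[str, float]],  # hypothetical: (answer, confidence)
    text: str,
    min_confidence: float = 0.7,  # assumed threshold; tune per system
) -> Result:
    """Call the model, turning failures into statuses an operator can act on."""
    try:
        answer, confidence = predict(text)
    except Exception as exc:
        # Log with traceback and return an explicit error instead of crashing.
        logger.exception("model call failed")
        return Result(None, "error", str(exc))
    if confidence < min_confidence:
        # Withhold the answer so a human or fallback path can handle it.
        logger.warning("low confidence %.2f; routing to review", confidence)
        return Result(None, "low_confidence", f"confidence={confidence:.2f}")
    return Result(answer, "ok")
```

Callers branch on `status`, so a failed or uncertain prediction can be routed to a queue or a default behavior rather than passed downstream as if it were a normal answer.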

Week 4: Harden and hand off

Week 4 is what separates Sprints that keep working from Sprints that become technical debt.

We start the week by reviewing everything the staging deployment surfaced. Most of the issues from client testing are small: UI edge cases, output formatting, a few latency problems under load. Some aren't. Week 4 is where we fix the real ones, even if it means revisiting an architectural decision we made in week 1.

Then we write the runbooks. A runbook is a document that tells whoever is operating the system how to handle specific failure scenarios. What to check when the model starts returning nonsense. How to restart the pipeline if it gets stuck. What the monitoring alerts mean and what to do when they fire. We write these based on what we actually saw during the Sprint, not generic best practices.

We run a knowledge transfer session with the team that's taking ownership. This isn't a demo. They've seen it running for two weeks. We walk through the architecture, the data pipeline, and the operational procedures together. Questions get answered in real time.

Finally, we define the monitoring thresholds. What latency is normal? What error rate should trigger an alert? How much drift in model outputs is acceptable before someone investigates? These numbers are specific to this system running on this data, and they're set before we leave.
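To illustrate, thresholds like these can be written down as a small, explicit config and checked against each monitoring window. The numbers below are placeholders, and measuring drift as the change in one label's share of outputs is just one simple assumption among many possible definitions.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Thresholds:
    # Placeholder values; real ones are set per system from observed behavior.
    p95_latency_ms: float = 2000.0
    max_error_rate: float = 0.02
    max_drift: float = 0.15  # allowed change in a label's share vs. baseline


def p95(values: Sequence[float]) -> float:
    """Nearest-rank 95th percentile, using integer math for the rank."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[max(0, rank - 1)]


def check_window(
    latencies_ms: Sequence[float],
    errors: int,
    total: int,
    label_share: float,
    baseline_share: float,
    t: Thresholds = Thresholds(),
) -> List[str]:
    """Return one alert message per threshold breached in this window."""
    alerts: List[str] = []
    if latencies_ms and p95(latencies_ms) > t.p95_latency_ms:
        alerts.append(f"latency: p95 above {t.p95_latency_ms:.0f} ms")
    if total > 0 and errors / total > t.max_error_rate:
        alerts.append(f"errors: rate above {t.max_error_rate:.0%}")
    if abs(label_share - baseline_share) > t.max_drift:
        alerts.append(f"drift: label share moved more than {t.max_drift:.0%}")
    return alerts
```

Keeping the thresholds in one typed object makes them easy to reference from the runbooks and to revise as the system's normal behavior becomes better understood.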

What you get at the end

A working system. Not a proof of concept, not a demo, not a well-documented set of prototypes. Something that runs in production, handles real load, and has a team that knows how to operate it.

We've built RAG systems for healthcare companies that opened $5M revenue streams. Automation pipelines that replaced 200+ hours of manual work per month for operations teams. AI-assisted review workflows for legal and compliance functions. The throughline across all of them: they shipped, they ran, and the people who took ownership weren't starting from zero.

One more thing worth saying clearly: not every problem needs a Sprint. If you're still figuring out where AI can help your business, a Sprint is probably too early. Start with the AI Automation Audit: one week, and you'll leave with a specific list of where AI can move the needle and in what order. That context makes a Sprint much more likely to succeed.

The Sprint is the build. The Audit is where you figure out what to build.

The service ladder

Some companies move past a Sprint and need ongoing AI leadership: building the second system, managing the vendors, owning the roadmap. That's the Fractional AI CTO engagement. It's not right for everyone, but for early-stage companies without a technical co-founder who can own AI strategy long-term, it's how they scale without a full-time hire.

Each engagement is designed to be useful on its own. Audit to Sprint to Fractional CTO is a natural progression, but most clients start at whichever point is right for where they are.

If any of this maps to a problem you're working on, the best starting point is to book a 30-minute discovery call.

Martin Tech Labs builds production AI systems for early-stage startups and mid-market companies. We move projects from stuck to shipped.