What an AI Agent Development Company Actually Builds

The term "AI agent" gets applied to systems that have very little in common.

At one end, someone plugs a GPT API into a Slack bot and calls it an AI agent. At the other end, a team builds an autonomous system that monitors a manufacturing line, diagnoses equipment behavior in real time, and dispatches maintenance requests without a human initiating anything. Both get called "AI agents." The gap between them is enormous.

When companies look for an AI agent development company, they're usually trying to figure out two things: which end of that spectrum they actually need, and whether the company they're talking to has shipped anything on the complex end.

Here's what serious AI agent development looks like, and what separates it from a chatbot with an expensive wrapper.

What an AI agent actually is

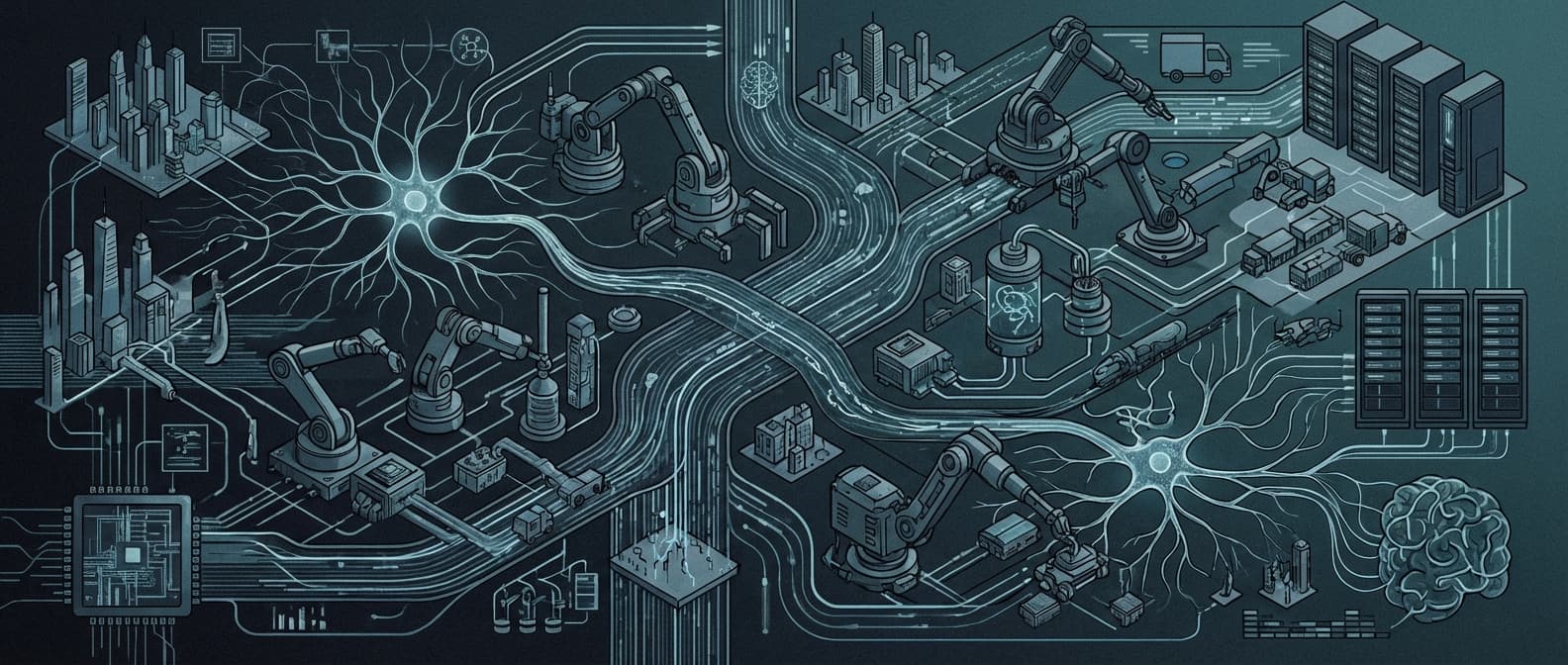

The word "agent" in AI has a specific meaning. An agent perceives inputs, reasons about them, and takes actions. In a loop, autonomously, without a human prompting each step.

A customer service chatbot isn't an agent in this sense. It responds to inputs, but a human initiates every interaction and the responses are mostly pre-structured.

A true AI agent:

- monitors a stream of inputs (documents, events, API feeds, sensor data)

- decides what to do based on what it sees

- executes actions: sends messages, updates records, triggers workflows, calls external APIs

- evaluates whether the action worked and adjusts

In practice, most production AI agent systems are multi-step pipelines rather than fully autonomous actors. The autonomous layer handles high-volume, predictable work. Humans stay in the loop for decisions with real consequences.
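
As a rough illustration, the perceive, decide, act, and evaluate loop can be sketched in a few lines of Python. This is a minimal sketch, not a real framework: `Decision`, `AgentLog`, `run_agent`, and the `decide` and `execute` callables are hypothetical names, and the `high_stakes` flag stands in for whatever rule a real system would use to hand work to a person.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class Decision:
    action: str        # hypothetical action name, e.g. "update_record"
    high_stakes: bool  # True when a person should approve before execution


@dataclass
class AgentLog:
    executed: list = field(default_factory=list)
    escalated: list = field(default_factory=list)
    failed: list = field(default_factory=list)


def run_agent(
    events: Iterable[dict],
    decide: Callable[[dict], Decision],
    execute: Callable[[Decision, dict], bool],
) -> AgentLog:
    """Perceive each event, decide, act, then record whether the action worked."""
    log = AgentLog()
    for event in events:                       # perceive
        decision = decide(event)               # reason
        if decision.high_stakes:
            log.escalated.append(event["id"])  # hand off to a human reviewer
            continue
        succeeded = execute(decision, event)   # act
        # evaluate: keep failures separate so they can be retried or reviewed
        if succeeded:
            log.executed.append(event["id"])
        else:
            log.failed.append(event["id"])
    return log
```

In a real deployment `events` would be a queue or stream rather than a list, and `execute` would call out to other systems. The shape of the loop stays the same.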

What companies are actually building

The most common production AI agent systems we work on fall into a few categories.

Document processing agents. A law firm processes several thousand contracts per week. The agent reads each document, extracts key terms, flags unusual clauses, and routes high-risk contracts to a senior attorney for review. The attorney sees a pre-populated summary instead of a blank document. What used to take a paralegal four hours per contract takes forty seconds.

This is the category we see most often, and honestly the most underrated. Clients consistently underestimate how much time their team spends on exactly this kind of work.

Workflow automation agents. A healthcare company has staff manually copying lab results from one system into another and then emailing updates to referring physicians. The agent monitors incoming lab data, matches results to patients, drafts compliant notifications, and sends them after a short review window. Staff time on that workflow drops by over 80%.

Monitoring and escalation agents. A SaaS company has thousands of customer accounts. Certain usage patterns predict churn, but nobody is watching logs closely enough to catch them early. The agent monitors usage events, scores accounts on churn risk daily, and creates tasks in the CRM when a score crosses a threshold. Account managers work from a prioritized list instead of guessing which accounts to call.
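
To make the scoring step concrete, here is a minimal sketch of a daily churn score with a threshold. Everything in it is an assumption for illustration: the `UsageSnapshot` fields, the weights, and the 0.6 threshold are invented, and a production system would typically fit the score to historical churn data instead of hand-picking weights.

```python
from dataclasses import dataclass


@dataclass
class UsageSnapshot:
    account_id: str
    logins_last_30d: int
    seats_used: int
    seats_paid: int
    open_support_tickets: int


def churn_risk(s: UsageSnapshot) -> float:
    """Return a 0..1 risk score from hand-picked, illustrative weights."""
    inactivity = 1.0 if s.logins_last_30d == 0 else min(1.0, 5 / s.logins_last_30d)
    utilization = s.seats_used / s.seats_paid if s.seats_paid else 0.0
    unused_seats = max(0.0, 1.0 - utilization)
    ticket_pressure = min(1.0, s.open_support_tickets / 5)
    return round(0.5 * inactivity + 0.3 * unused_seats + 0.2 * ticket_pressure, 3)


def accounts_to_flag(snapshots, threshold=0.6):
    """Return (account_id, score) pairs at or above the threshold, riskiest first."""
    scored = [(s.account_id, churn_risk(s)) for s in snapshots]
    flagged = [pair for pair in scored if pair[1] >= threshold]
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)
```

The output of `accounts_to_flag` is the prioritized list; creating the CRM tasks is a separate step that depends on the CRM in use.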

Research and synthesis agents. A financial services firm needs analysts to track regulatory updates across multiple jurisdictions and produce weekly summaries. The agent monitors feeds, summarizes new developments, cross-references them against internal policy databases, and generates draft report sections. Analyst time on this drops from a full day to under two hours.

These aren't demos. They're running in production, handling real volume, and their outputs affect actual business decisions.

What the development actually looks like

Building an AI agent is mostly not about the AI. The model is rarely the hard part.

Data pipelines. Agents need reliable, clean inputs. In most organizations, data is fragmented across systems that weren't designed to work together. Getting consistent, usable data into the agent is usually the hardest part of the project. A good agency spends significant time here before touching the model.
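
A small sketch of what that work tends to look like: normalizing records from systems that name the same field differently, and rejecting anything incomplete before the agent sees it. The `LabResult` schema and the field aliases below are hypothetical, chosen only to illustrate the pattern.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class LabResult:
    patient_id: str
    test_code: str
    value: float
    collected_at: datetime


# Hypothetical field names used by two upstream systems for the same data.
FIELD_ALIASES = {
    "patient_id": ("patient_id", "PatientID", "mrn"),
    "test_code": ("test_code", "TestCode"),
    "value": ("value", "Result"),
    "collected_at": ("collected_at", "CollectedAt"),
}


def _first_present(raw: dict, names: tuple):
    """Return the first non-empty value found under any of the given names."""
    for name in names:
        if raw.get(name) not in (None, ""):
            return raw[name]
    return None


def normalize(raw: dict) -> Tuple[Optional[LabResult], List[str]]:
    """Return (record, errors). The record is None if any field is missing or invalid."""
    fields = {key: _first_present(raw, names) for key, names in FIELD_ALIASES.items()}
    errors = [f"missing {key}" for key, val in fields.items() if val is None]
    if errors:
        return None, errors
    try:
        value = float(fields["value"])
        collected_at = datetime.fromisoformat(str(fields["collected_at"]))
    except (TypeError, ValueError) as exc:
        return None, [f"invalid field: {exc}"]
    record = LabResult(
        patient_id=str(fields["patient_id"]).strip(),
        test_code=str(fields["test_code"]).strip().upper(),
        value=value,
        collected_at=collected_at,
    )
    return record, []
```

Returning the errors alongside the record, instead of raising, makes it easy to count and report rejected inputs per source system.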

Failure handling. What happens when the LLM returns something unexpected? When a document is malformed? When an API times out? Production agents have explicit handling for every failure mode they can anticipate: logging, alerting, and fallback logic. Proof-of-concept (PoC) agents assume the happy path.
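
One common pattern, sketched here under assumptions: validate the model output, retry with backoff on timeouts or malformed responses, log each failure, and fall back to a human queue when retries run out. The `call_model` callable, the required keys, and the `NeedsHumanReview` exception are all hypothetical placeholders, not part of any particular library.

```python
import json
import logging
import time
from typing import Callable

logger = logging.getLogger("agent")


class NeedsHumanReview(Exception):
    """Raised when automatic handling has been exhausted."""


def extract_terms(
    document: str,
    call_model: Callable[[str], str],  # hypothetical: takes text, returns a JSON string
    required_keys=("parties", "term_months"),
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> dict:
    """Call the model, validate its output, and retry with exponential backoff."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            parsed = json.loads(call_model(document))
            if not isinstance(parsed, dict):
                raise ValueError("expected a JSON object")
            missing = [k for k in required_keys if k not in parsed]
            if missing:
                raise ValueError(f"missing keys: {missing}")
            return parsed
        except (TimeoutError, ValueError) as exc:  # JSONDecodeError is a ValueError
            last_error = exc
            logger.warning("attempt %d/%d failed: %s", attempt, max_attempts, exc)
            if attempt < max_attempts:
                time.sleep(base_delay * 2 ** (attempt - 1))
    # Fallback: the caller is expected to route this document to a person.
    raise NeedsHumanReview(f"gave up after {max_attempts} attempts: {last_error}")
```

The important design choice is that the function never returns unvalidated output. It either returns a dictionary with the expected keys or raises something the caller has to handle.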

Evaluation. How do you know if the agent is working correctly? Most teams don't have a good answer. Serious AI agent development includes building evaluation sets: known inputs with known correct outputs, run on every model update or system change. Without this, you're flying blind.
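
A minimal sketch of such an evaluation run, assuming the agent can be called as a plain function and that exact-match comparison is good enough for the task. The `run_eval` name and the 95% pass bar are illustrative; many real tasks need fuzzier scoring than string equality.

```python
from typing import Callable, Sequence, Tuple


def run_eval(
    agent: Callable[[str], str],
    cases: Sequence[Tuple[str, str]],  # (input, expected output) pairs
    min_accuracy: float = 0.95,
) -> dict:
    """Run every known case through the agent and report accuracy and failures."""
    failures = []
    for given, expected in cases:
        actual = agent(given)
        if actual != expected:
            failures.append({"input": given, "expected": expected, "actual": actual})
    accuracy = (len(cases) - len(failures)) / len(cases) if cases else 0.0
    return {
        "accuracy": accuracy,
        "passed": accuracy >= min_accuracy,
        "failures": failures,
    }
```

Wired into a deployment pipeline, a failing `passed` flag would block a model or prompt change from shipping, and the `failures` list shows exactly which cases regressed.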

Human-in-the-loop design. Where does a human need to review before the agent acts? What's the interface for that review? How fast does it need to be for people to actually use it? These decisions shape the whole system architecture and usually need to be figured out before writing any model code.
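
One way to express those decisions in code is a review queue with two modes: items that wait for explicit approval, and lower-risk items that go out on their own once a review window passes without a rejection. The sketch below is an assumption about how this could be modeled; `ReviewQueue`, `PendingItem`, and the `requires_approval` flag are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class PendingItem:
    item_id: str
    draft: str
    release_at: datetime
    requires_approval: bool
    status: str = "pending"  # pending | approved | rejected


@dataclass
class ReviewQueue:
    window: timedelta
    items: dict = field(default_factory=dict)

    def submit(self, item_id: str, draft: str, now: datetime,
               requires_approval: bool = False) -> None:
        self.items[item_id] = PendingItem(
            item_id, draft, now + self.window, requires_approval
        )

    def approve(self, item_id: str) -> None:
        self.items[item_id].status = "approved"

    def reject(self, item_id: str) -> None:
        self.items[item_id].status = "rejected"

    def releasable(self, now: datetime) -> list:
        """IDs approved explicitly, or low-risk and unrejected after the window."""
        ready = []
        for item in self.items.values():
            window_elapsed = item.status == "pending" and now >= item.release_at
            if item.status == "approved" or (window_elapsed and not item.requires_approval):
                ready.append(item.item_id)
        return ready
```

Passing `now` in explicitly keeps the queue easy to test. Which items get `requires_approval=True` is the actual design decision, and it belongs to the people who own the consequences, not to the code.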

Cost at scale. LLM inference isn't free. For high-volume agents, costs accumulate fast. Production agents are designed to minimize unnecessary calls, cache where it's safe to, and route simpler subtasks to cheaper models. This is an engineering discipline, not an afterthought.
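
A minimal sketch of two of those techniques, caching and model routing, under stated assumptions: `cheap` and `strong` are hypothetical callables wrapping two model tiers, and prompt length is used as a deliberately crude stand-in for task difficulty. A real router would use a better signal, such as task type.

```python
import hashlib
from typing import Callable


class CostAwareClient:
    """Wraps two model tiers with an in-memory cache and a simple routing rule."""

    def __init__(
        self,
        cheap: Callable[[str], str],
        strong: Callable[[str], str],
        max_cheap_chars: int = 2000,
    ):
        self.cheap = cheap
        self.strong = strong
        self.max_cheap_chars = max_cheap_chars
        self._cache = {}
        self.calls = {"cheap": 0, "strong": 0, "cache_hit": 0}

    def complete(self, prompt: str, cacheable: bool = True) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if cacheable and key in self._cache:
            self.calls["cache_hit"] += 1
            return self._cache[key]
        # Crude routing rule: short prompts go to the cheaper tier.
        tier = "cheap" if len(prompt) <= self.max_cheap_chars else "strong"
        self.calls[tier] += 1
        model = self.cheap if tier == "cheap" else self.strong
        result = model(prompt)
        if cacheable:
            self._cache[key] = result
        return result
```

The `cacheable` flag exists because caching is only safe when the same prompt should always produce the same answer. The `calls` counter is the part that gets skipped most often and matters most: you can't reduce costs you aren't measuring.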

None of this shows up in a demo.

How to tell if an agency has done this before

Ask any agency you're evaluating to show you a production system they've built. Not a prototype. A system that runs live, handles real volume, and has been operating for at least six months.

Ask specifically:

- What does failure handling look like in this system?

- How is the agent evaluated after deployment?

- What does a maintenance issue look like, and how is it resolved?

- Who owns the system after you leave?

An agency that has shipped production AI agents will answer these questions easily, with specifics. An agency running PoCs and calling them agents will get vague.

The difference matters because building a production AI agent is much harder than it looks from the outside. The gap between a working demo and a working system is where most AI projects get stuck. The teams that have made that crossing before know where the complexity lives. The ones that haven't will find it during your project, and you'll pay for the education.

Martin Tech Labs builds AI agents that run in production. If you have a workflow problem that you think AI could solve, we'll tell you honestly whether it can, and what it would take to build it right. Book a call.