Most people building with AI start with one agent. That makes sense. You have a problem, you give an AI the tools to solve it, and you see what happens.

The trouble starts when that one agent has to do ten things and none of them are simple.

The single-agent trap

A single agent handling a complex workflow has a specific failure pattern. It works in demos. It works in testing with simple inputs. Then you put it in front of real data, real edge cases, a user who says something unexpected, and it starts doing things it shouldn't.

The typical fix is to add more instructions. Make the prompt longer. Add more examples. Add guardrails. After a few rounds of this, you have an agent with a thousand-line system prompt, unpredictable behavior in corner cases, and no clear way to debug what went wrong when it does fail.

That's not an AI problem. It's an architecture problem.

What multiagent systems actually fix

The insight behind multiagent architecture is straightforward: a focused system is a debuggable system.

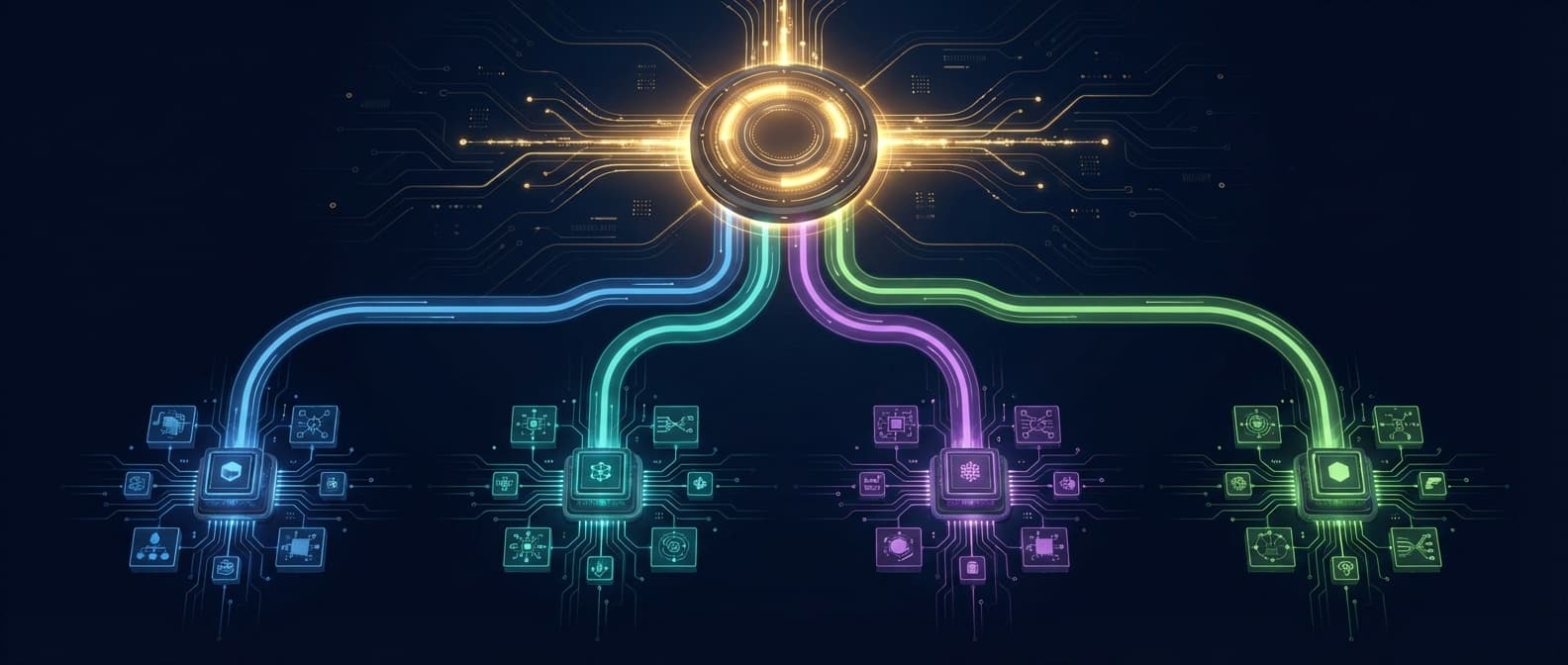

Instead of one agent that plans, executes, verifies, and handles errors, you build specialized agents for each role. The planner breaks work into steps. The executor handles one step at a time. The verifier checks results. An orchestrator routes work between them. Each component is small enough to test in isolation and observable enough to debug when something goes wrong.

This is not a new idea. It's the same reason many engineering teams moved from monoliths to microservices: not because distributed systems are simpler, but because the failure surface of each component is bounded.
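To make the division of roles concrete, here is a minimal sketch. It assumes each agent can be modeled as a plain Python function; in a real system each one would wrap a model call. The function names and the `done(...)` result format are made up for illustration.

```python
from typing import Callable

# Hypothetical stand-ins for model-backed agents.
def plan(goal: str) -> list[str]:
    """Planner: break a goal into ordered steps. It executes nothing."""
    return [f"research: {goal}", f"draft: {goal}"]

def execute(step: str) -> str:
    """Executor: handle exactly one step."""
    return f"done({step})"

def verify(step: str, result: str) -> bool:
    """Verifier: check a result before it moves downstream."""
    return result == f"done({step})"

def orchestrate(
    goal: str,
    planner: Callable[[str], list[str]] = plan,
    executor: Callable[[str], str] = execute,
    verifier: Callable[[str, str], bool] = verify,
) -> list[str]:
    """Orchestrator: route each planned step through execution and verification."""
    results = []
    for step in planner(goal):
        result = executor(step)
        if not verifier(step, result):
            # The failure points at one step and one role, not the whole system.
            raise RuntimeError(f"verification failed at step: {step}")
        results.append(result)
    return results
```

Because every role is passed in as an argument, any one of them can be swapped for a stub without touching the others.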

The four roles worth getting right

In the production systems we've built, four roles matter most:

The planner. Takes a goal and breaks it into ordered steps with clear success criteria. This agent should not execute anything. Separation matters because planning logic and execution logic fail differently and need to be debugged separately.
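One way to keep the planner honest is to make its output a data structure that can be checked before anything runs. The sketch below is an assumption about what such a structure could look like; `Step`, `Plan`, and `validate_plan` are illustrative names, not part of any library.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Step:
    order: int
    description: str
    success_criteria: str  # how the verifier will know this step worked

@dataclass(frozen=True)
class Plan:
    goal: str
    steps: tuple[Step, ...]

def validate_plan(plan: Plan) -> list[str]:
    """Return a list of problems; an empty list means the plan is well-formed."""
    problems = []
    if not plan.steps:
        problems.append("plan has no steps")
    expected = list(range(1, len(plan.steps) + 1))
    if [s.order for s in plan.steps] != expected:
        problems.append("steps are not numbered 1..n in order")
    for s in plan.steps:
        if not s.success_criteria.strip():
            problems.append(f"step {s.order} has no success criteria")
    return problems
```

A plan that fails validation is a planner bug, and it shows up before the executor has touched anything.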

The executor. Handles one step at a time, using tools, APIs, or other agents as needed. The scope of any executor should be narrow enough that you can write a meaningful test for it. If you can't, it's doing too much.

The verifier. Checks outputs before passing them downstream. Hallucination detection, format validation, business rule checks. This is the most skipped layer in first-pass builds and the most painful to retrofit.
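As a rough illustration of the format and business-rule checks, here is a sketch of a verifier for a hypothetical refund agent that is supposed to return JSON. The field names and the `MAX_REFUND` limit are invented for the example, and hallucination detection is out of scope here.

```python
import json

MAX_REFUND = 500.0  # hypothetical business rule

def verify_refund_output(raw: str) -> list[str]:
    """Return reasons to reject the output; an empty list means it passes."""
    # Format validation: the output must be a JSON object.
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]

    errors = []
    order_id = data.get("order_id")
    if not isinstance(order_id, str) or not order_id:
        errors.append("order_id must be a non-empty string")

    amount = data.get("amount")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        errors.append("amount must be a number")
    elif not 0 < amount <= MAX_REFUND:
        # Business rule check: refunds are capped.
        errors.append(f"amount must be in (0, {MAX_REFUND}]")
    return errors
```

Returning reasons rather than a bare boolean gives the orchestrator something to log or feed back into a retry.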

The orchestrator. Routes work between agents, handles retries, escalates failures, and maintains shared state. This is the hardest layer to build and the most underestimated. Teams that underinvest here end up with agents that don't communicate effectively, produce conflicting decisions, and create accountability gaps no one can trace.

The orchestration layer is not optional

The pattern that causes the most production failures is treating orchestration as a nice-to-have. Teams focus on building capable individual agents, then connect them loosely and hope for the best.

What you actually need from an orchestration layer:

- A shared context store that all agents can read from and write to

- A retry and fallback policy for each agent type

- Observable execution state so you can see what happened and when

- Clear escalation paths for cases no agent can handle

Without this, multiagent systems don't fail gracefully. They produce partial results, silent errors, and inconsistent outputs that are genuinely hard to trace. When something goes wrong, you don't know which agent failed, or why, or what state the system was in when it did.
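The four requirements listed above can fit in surprisingly little code. The following is a minimal in-memory sketch, not a production design: it assumes agents are callables that receive the shared context, and it uses a single `max_attempts` setting where a real system would likely have a policy per agent type. `Orchestrator`, `Escalation`, and `run_step` are illustrative names.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

Agent = Callable[[dict], Any]  # an agent reads the shared context, returns a result

class Escalation(Exception):
    """No agent could handle the step; a human or upstream system takes over."""

@dataclass
class Orchestrator:
    max_attempts: int = 2
    context: dict = field(default_factory=dict)  # shared context store
    log: list = field(default_factory=list)      # observable execution state

    def _record(self, step, agent, attempt, status, error=None):
        self.log.append({"ts": time.time(), "step": step, "agent": agent,
                         "attempt": attempt, "status": status, "error": error})

    def run_step(self, step: str, primary: Agent, fallback: Optional[Agent] = None) -> Any:
        candidates = [("primary", primary)]
        if fallback is not None:
            candidates.append(("fallback", fallback))
        for label, agent in candidates:
            for attempt in range(1, self.max_attempts + 1):
                try:
                    result = agent(self.context)
                except Exception as exc:
                    # Record the failure, then retry or move on to the fallback.
                    self._record(step, label, attempt, "error", repr(exc))
                    continue
                self.context[step] = result  # later agents can read this
                self._record(step, label, attempt, "ok")
                return result
        # Every agent and every attempt failed: escalate instead of guessing.
        self._record(step, "none", 0, "escalated")
        raise Escalation(f"step {step!r} exhausted all agents")
```

After any run, `log` answers the questions in the paragraph above: which agent failed, on which attempt, and with what error.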

What good looks like

A well-architected multiagent system has a few properties that are worth checking for before you ship:

Each agent has one job. If you can't describe what an agent does in one sentence, it's doing too much.

Failure is visible. Every agent should emit observable state: logs, status updates, and structured outputs that can be inspected. If an agent fails silently, you'll spend hours debugging edge cases you could have caught at the component level.
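A small wrapper is one way to guarantee that no agent call goes unrecorded. This sketch uses Python's standard `logging` and `json` modules; the `run_observed` helper and the event fields are assumptions chosen for the example.

```python
import json
import logging
import time

logger = logging.getLogger("agents")

def run_observed(agent_name, fn, payload):
    """Run an agent function and emit exactly one structured event, success or failure."""
    started = time.perf_counter()
    event = {"agent": agent_name, "status": "ok"}
    try:
        return fn(payload)
    except Exception as exc:
        event.update(status="error", error=f"{type(exc).__name__}: {exc}")
        raise  # stay visible to the caller too; never swallow the failure
    finally:
        # Runs on both paths, so every call leaves a record.
        event["duration_ms"] = round((time.perf_counter() - started) * 1000, 2)
        logger.info(json.dumps(event))
```

Each event is a single JSON line, so it can be filtered by agent or status without parsing free-form text.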

The orchestrator owns accountability. One component should be responsible for end-to-end task completion, rather than responsibility being shared across agents. When something fails, you should be able to trace it to a specific decision point.

You can test components independently. If testing the full system is the only way to catch bugs, the architecture is wrong. Individual agents should be testable in isolation with mocked inputs.
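Here is roughly what that looks like in practice, using `unittest.mock` from the standard library. The `summarize_step` executor and the `llm.complete` method are hypothetical; the point is that the model client is injected, so a test can replace it with a mock.

```python
from unittest.mock import Mock

def summarize_step(text: str, llm) -> str:
    """Hypothetical executor: one narrow job, with the model client passed in."""
    if not text.strip():
        raise ValueError("nothing to summarize")
    return llm.complete(f"Summarize in one sentence: {text}").strip()

def test_summarize_step_uses_model_and_trims_output():
    fake_llm = Mock()
    fake_llm.complete.return_value = "  A short summary.  "
    assert summarize_step("long text", fake_llm) == "A short summary."
    fake_llm.complete.assert_called_once_with("Summarize in one sentence: long text")

def test_summarize_step_rejects_empty_input():
    fake_llm = Mock()
    try:
        summarize_step("   ", fake_llm)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError")
    # The guard fires before any model call is made.
    fake_llm.complete.assert_not_called()
```

Neither test needs a network connection, an API key, or the rest of the system.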

When to move to multiagent

The honest answer is: not always. A single, well-scoped agent is the right choice for simple, bounded tasks. The complexity of orchestrating multiple agents is real and adds overhead that isn't worth it for a straightforward automation.

The signal that you need multiple agents is usually one of these:

- The single-agent prompt keeps growing and behavior is becoming unpredictable

- The task involves fundamentally different types of work (planning vs. executing vs. verifying)

- Failures are hard to debug because you can't isolate what went wrong

- Latency is unacceptable because the agent is doing work that could run in parallel

If you're hitting these problems, adding more instructions to a single agent won't fix them. The architecture needs to change.
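On the latency signal specifically: once work is split into independent agents, running them concurrently is mostly a scheduling question. A minimal sketch with `asyncio`, where the agent names are invented and `asyncio.sleep` stands in for a model or API call:

```python
import asyncio

async def fetch_agent(name: str, delay: float) -> str:
    """Hypothetical independent agent; the sleep stands in for real I/O."""
    await asyncio.sleep(delay)
    return f"{name}: ok"

async def run_parallel(delay: float = 0.2) -> list[str]:
    # gather runs the coroutines concurrently and returns results
    # in the order the awaitables were passed, not completion order.
    return await asyncio.gather(
        fetch_agent("pricing", delay),
        fetch_agent("inventory", delay),
        fetch_agent("reviews", delay),
    )

if __name__ == "__main__":
    print(asyncio.run(run_parallel()))
```

The three calls overlap, so the total wait is close to the slowest single call rather than the sum of all three. This only helps when the agents really are independent; steps that depend on each other's output still have to run in order.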

What this means for your build

If you're starting an agentic AI project and the workflow is genuinely complex, the conversation about architecture belongs at the start, not after the first version breaks in production.

The choices made early (how agents are scoped, what the orchestration layer looks like, how context is managed across steps) determine how debuggable and maintainable the system is long-term.

We've built production multiagent systems for clients in fintech, healthcare, and operations. If you're planning one, book a discovery call. In one week, you get a clear recommendation on what to build and how to structure it.