Why AI Projects Fail Before They Ship

Most AI projects don't fail because the AI didn't work.

The models are good. The tooling has matured fast. You can build something impressive in an afternoon with the right APIs and a little code. The technology is rarely the limiting factor.

What kills AI projects is a different set of problems. They exist before a line of code gets written, and they compound quietly until the project runs out of time, money, or momentum. The hard part isn't building AI. It's building AI that runs in a real business, under real conditions, and keeps working after the demo.

These are the patterns we see most often.

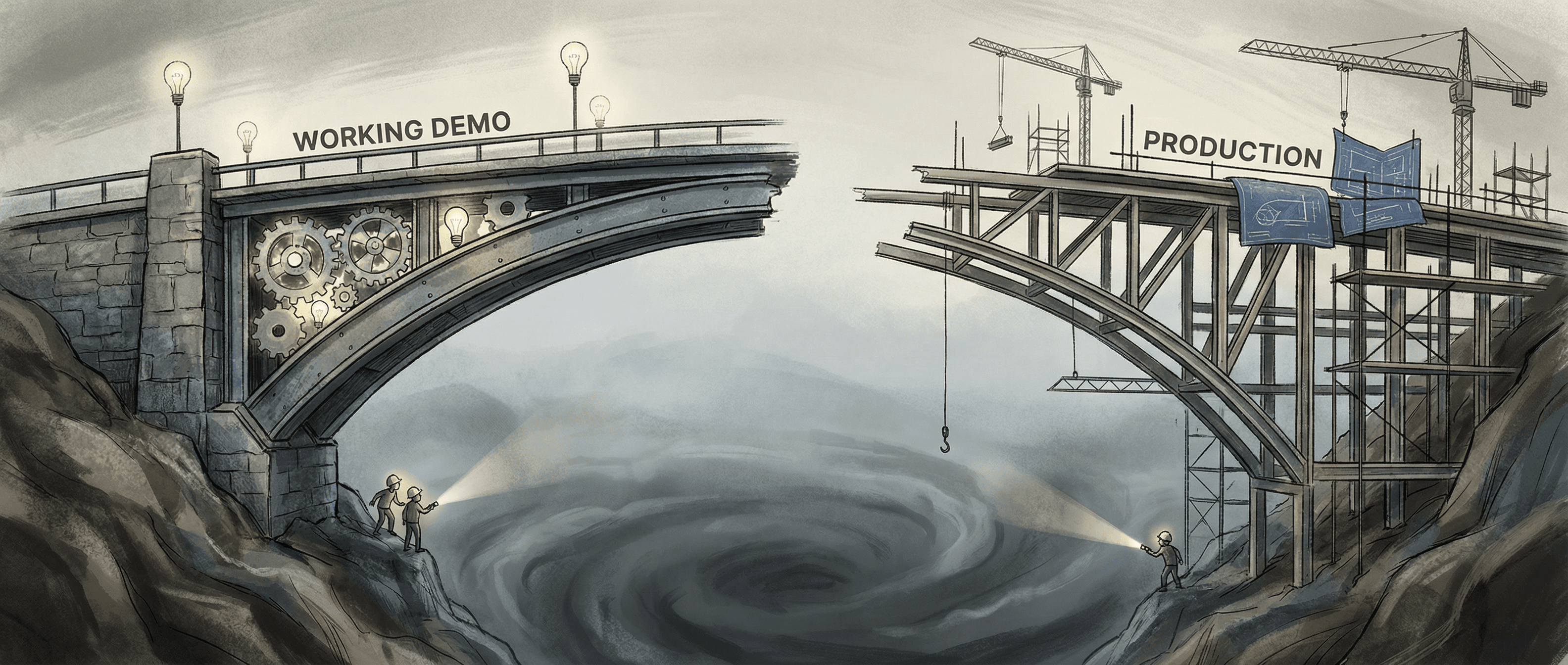

The demo-to-production gap

This is the one that catches the most teams off guard.

A team builds a proof of concept. It works well enough. Leadership sees it, gets excited, and the project gets funded. Then the team spends the next four months trying to turn that proof of concept into a production system, running into everything they didn't account for.

Production AI has requirements a PoC never faces. Real users generate edge cases the demo never saw. Real data is messier than the curated dataset used in testing. Real scale means the system that ran fine on a laptop slows to a crawl under load. Real stakes mean errors that were tolerable in a demo now have consequences.

The teams that handle this transition well are the ones who built with production in mind from the start: designing for failure modes, building in observability, structuring the data pipeline before the model. The teams that struggle are the ones who built a demo and then tried to retrofit it into a production system.

There's no shortcut here. The only fix is front-loading the design work that most teams skip.

Scope that grows without the system evolving to match

AI projects attract scope. Once leadership sees what the system can do, the question becomes: can it also do this? And this? What about this use case we didn't think of before?

Scope expansion is normal in software. The problem specific to AI projects is that the underlying model and data infrastructure usually weren't designed for the expanded scope. The first version was built to handle three document types. Now someone wants it to handle seven. The original architecture made assumptions that don't hold anymore.

Teams respond by patching. An exception here, a workaround there. The system keeps growing, but the technical foundation stays frozen. After enough patches, you have a system that sort of works, but nobody wants to touch it, and every new feature takes twice as long as expected.

The pattern has an easy description and a hard solution: the architecture has to evolve with the product. That requires someone who understands both the business goals and the technical system well enough to know when a patch is fine and when it's storing up future pain.

That's why experienced technical judgment needs to stay close to product decisions, not just implementation.

Missing technical leadership at the right level

There are two different problems here that look the same from the outside.

The first is teams that have developers but no one responsible for the overall technical direction. The developers are competent. They build what gets specified. But no one is asking whether the architecture is right, whether the approach will hold up, or whether the requirements are actually buildable as written. Technical decisions get made by default. Whoever writes the code makes the call.

The second is leadership that understands the business but not the technology. They can define what they want. They can't evaluate whether what they're being told is true, whether the approach their team has taken is reasonable, or whether a problem that keeps getting pushed out is actually solvable. They end up dependent on their team's judgment with no independent check.

Both produce the same outcome: projects that drift. Features that don't quite work. Estimates that keep slipping. Technical debt that accumulates until it can't be ignored.

The fix is having someone who understands both the technical system and the business well enough to evaluate tradeoffs honestly. Not someone who defers all technical questions to the engineers, and not someone who defers all business questions to the stakeholders. Someone who can actually sit in the middle and make calls.

For many teams, especially at the growth stage, this is a fractional role. You don't need a full-time technical executive for every decision. You need someone who can establish the foundation, define the approach, review the critical choices, and course-correct when things drift.

Underestimating the data problem

Ask any team that's shipped an AI system in production what surprised them most. We've asked this question a lot. Data comes up almost every time.

The assumption going in is that the data exists, is organized, and is mostly clean. The reality is usually different. Data sits in multiple systems with inconsistent schemas. Fields that should match don't. The historical records that were supposed to train the model have gaps or labeling errors. The real-time feed that supplies the system in production turns out to have edge cases nobody documented.

This doesn't make AI projects impossible. It makes them harder and slower than teams expect. And it has a specific effect on timelines: data problems tend to surface mid-project, after plans and commitments have been made.

Teams that manage this well do the data audit before they start building. They know what they have, where the gaps are, and what assumptions the model is making about the input data. They build in validation at the ingestion layer. They design the system with data quality in mind, not as an afterthought.
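Validation at the ingestion layer can start small. The sketch below is one way to do it in Python, not a prescribed implementation: the field names (`customer_id`, `amount`, `created_at`) and the `validate_batch` helper are hypothetical, chosen only for illustration. The idea is to separate clean records from rejected ones and record why each was rejected, so data problems show up as counts you can review instead of surprises mid-project.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Any


@dataclass
class ValidationResult:
    valid: list[dict[str, Any]]
    rejected: list[tuple[dict[str, Any], list[str]]]


# Hypothetical schema for illustration: field name -> expected Python type.
REQUIRED_FIELDS = {"customer_id": str, "amount": float, "created_at": str}


def check_record(record: dict[str, Any]) -> list[str]:
    """Return the problems found in one record (empty list if clean)."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        value = record.get(name)
        if value is None or value == "":
            problems.append(f"missing {name}")
        elif not isinstance(value, expected):
            problems.append(f"{name} should be {expected.__name__}")
    # Timestamps often arrive as strings; confirm they actually parse.
    created = record.get("created_at")
    if isinstance(created, str) and created:
        try:
            datetime.fromisoformat(created)
        except ValueError:
            problems.append("created_at is not an ISO 8601 timestamp")
    return problems


def validate_batch(records: list[dict[str, Any]]) -> ValidationResult:
    """Split a batch into clean records and rejected records with reasons."""
    result = ValidationResult(valid=[], rejected=[])
    for record in records:
        problems = check_record(record)
        if problems:
            result.rejected.append((record, problems))
        else:
            result.valid.append(record)
    return result
```

Keeping the rejected records alongside their reasons, rather than silently dropping them, gives the team something concrete to audit: which fields fail most often, and from which source.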

Teams that skip this end up doing the data work twice: once when they discover the problem, and again when the first fix turns out to be incomplete.

What actually works

The projects that ship do a few things differently.

They treat the discovery phase as real work. Understanding the problem, auditing the data, defining what success looks like: this work, not the first sprint, determines whether the project is buildable.

They design for production from the start. That means observability, error handling, and failure mode planning built in from day one. Not bolted on later.
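As a rough illustration of what "built in from day one" can look like, here is a minimal Python sketch that wraps a model call with logging, retries, and a planned fallback. Everything in it is an assumption made for the example: `call_with_fallback` is a hypothetical helper, `predict` stands in for whatever function calls your model, and the retry counts are placeholders rather than recommendations.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("model_calls")


def call_with_fallback(
    predict: Callable[[str], str],
    text: str,
    fallback: str,
    max_attempts: int = 3,
    backoff_seconds: float = 0.5,
) -> tuple[str, bool]:
    """Call predict(text); retry on failure, then return the fallback.

    Returns (answer, used_fallback) so callers can count degraded responses.
    """
    for attempt in range(1, max_attempts + 1):
        started = time.perf_counter()
        try:
            answer = predict(text)
        except Exception:
            # Broad catch is deliberate in this sketch: any failure is
            # logged with its traceback and retried after a growing delay.
            logger.exception(
                "predict failed (attempt %d of %d)", attempt, max_attempts
            )
            if attempt < max_attempts:
                time.sleep(backoff_seconds * attempt)
            continue
        elapsed_ms = (time.perf_counter() - started) * 1000
        logger.info("predict ok in %.1f ms (attempt %d)", elapsed_ms, attempt)
        return answer, False
    # Planned failure mode: every attempt failed, so degrade gracefully.
    logger.error("all %d attempts failed; returning fallback", max_attempts)
    return fallback, True
```

The `used_fallback` flag matters as much as the retry loop: it lets the rest of the system measure how often users receive a degraded answer, which is the kind of signal a demo never needs and a production system does.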

They keep experienced technical judgment close to product decisions. Not to slow things down, but because the decisions that compound are the ones made early, under time pressure, by people who weren't sure what they were trading off.

And they scope tightly. The projects that fail usually tried to do too much in the first version. The projects that ship define a working first version, deliver it, and build from there.

If your AI project is stuck, or you're worried it's heading in that direction, we can usually tell in one conversation whether it's a fixable problem or something more fundamental.

Book a discovery call to talk through what you're working on. No pitch, no commitment. Just a direct conversation about what you're dealing with and whether we're the right help.

Related: What an AI agent development company actually builds