Why Your AI Rollout Needs an Operating Model

Most teams do not need another AI pilot.

They need an operating model.

That sounds less exciting than talking about models, agents, or autonomy. It is still the thing that usually decides whether an AI rollout turns into useful production work or another internal experiment nobody fully trusts.

The pattern is easy to spot. A team gets a good demo working. People see the upside. Leadership asks where else AI can help. Then the real questions show up:

- Who owns this workflow?

- What systems can the AI read?

- What is it allowed to change?

- Which actions need approval?

- What gets logged?

- Who can pause it if something goes wrong?

If those answers are vague, the rollout is not ready.

That is not just my opinion. The strongest public enterprise AI signals in April 2026 pointed in the same direction. OpenAI's April 8 enterprise update said customers want agents connected to company context and internal systems, but governed by the right permissions and controls. Anthropic's April 16 PayPal rollout session centered on role-based access controls, spend caps, usage analytics, and granular connector controls. AWS's April 17 marketing case study showed a narrow workflow winning because validation, SEO checks, accessibility checks, and publishing governance stayed in the loop. NIST's AI Agent Standards Initiative, updated April 20, keeps pushing identity and authorization closer to the center of agent architecture.

The market is getting more operational.

That is useful for founders because it cuts through a lot of noise. If you want AI to do real work inside the business, the first question is not "Which model should we use?" It is "What is the operating model for this workflow?"

Start with ownership

Every production AI workflow needs a named owner.

Not "the business." Not "ops." Not "the AI team." A person or role.

That owner is responsible for the business outcome and the rules around the system. They decide what success looks like, what the AI can do on its own, what must escalate, and what tradeoffs matter when the workflow gets messy.

Without ownership, teams drift into a predictable failure mode. Everyone likes the upside, but nobody is responsible for the boundaries.

Take inbound lead qualification. Marketing wants faster routing. Sales wants better fit. Operations wants clean CRM updates. Leadership wants more booked calls. Those goals overlap, but they are not identical. If the workflow has to choose between speed and caution, someone has to make that call before the AI is turned loose.

That is operating-model work. It needs a name on it.

Define authority in verbs, not categories

This is where a lot of teams get sloppy.

They say the AI can "use the CRM" or "work in Zendesk" or "help publish content." That language is too broad to be safe or useful.

Production authority should be written in verbs:

- Can it read records?

- Can it draft an update?

- Can it create a task?

- Can it change a field?

- Can it send a customer-facing message?

- Can it publish?

- Can it approve, refund, invite, or delete?

Those verbs do not carry the same risk.

Reading is not writing. Drafting is not sending. Tagging is not changing a system of record. Recommending is not approving.

One reason Anthropic's PayPal rollout session was useful is that it was not framed as "here is a very smart model." It was framed around deployment controls that serious teams actually need: group-level access, spend limits, telemetry, and connector restrictions. That is much closer to how real rollout decisions work.

For most first production workflows, I still think the conservative version wins. Let the AI inspect approved records, classify work, draft the next step, summarize context, and create an internal task. Keep higher-risk actions behind review until the workflow proves it can survive real traffic.

That approach is not timid. It is how trust gets built.
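To make "verbs, not categories" concrete, here is a minimal sketch of what a verb-level policy could look like in Python. The system names, verb names, and the `check_action` helper are all hypothetical, invented for illustration; the only idea taken from the text above is that each verb gets its own decision and anything unlisted is denied.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"          # the AI may act on its own
    NEEDS_REVIEW = "review"  # a human must approve first
    DENY = "deny"            # never permitted in this workflow


# Hypothetical policy for a lead-qualification workflow.
# Keys are (system, verb) pairs; the names are assumptions, not a real API.
POLICY = {
    ("crm", "read_record"): Decision.ALLOW,
    ("crm", "draft_update"): Decision.ALLOW,
    ("crm", "create_task"): Decision.ALLOW,
    ("crm", "change_field"): Decision.NEEDS_REVIEW,
    ("email", "send_customer_message"): Decision.NEEDS_REVIEW,
    ("crm", "delete_record"): Decision.DENY,
}


def check_action(system: str, verb: str) -> Decision:
    """Return the decision for one action; anything unlisted is denied."""
    return POLICY.get((system, verb), Decision.DENY)


print(check_action("crm", "read_record").value)             # allow
print(check_action("email", "send_customer_message").value)  # review
print(check_action("billing", "refund").value)               # deny
```

The default-deny lookup is the design choice that matters here. A new tool or verb stays blocked until someone, ideally the named owner, adds it to the policy on purpose.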

Put stop rules in writing

If the only control is "someone will keep an eye on it," then there is no real control.

Every production workflow needs written stop rules. These are the conditions that force the AI to pause, escalate, or hand off to a human.

Common stop rules include:

- required data is missing

- records conflict across systems

- the request touches money, access, legal, compliance, or security

- confidence is low

- the workflow falls outside an approved category

- a tool call fails in the middle of a multi-step action

This is where a lot of pilots quietly fall apart. The workflow looks good when the input is clean and the path is obvious. Then it hits a case that needs judgment around policy, risk, or incomplete data, and nobody defined what "stop" looks like.

That is why I like the AWS publishing case study so much. The headline result was speed, but the more important detail was that quality checks stayed embedded in the workflow. SEO validation, accessibility requirements, and publishing governance were part of the design, not cleanup work after the fact.

The first production version should earn the right to expand. It should not start with maximum freedom.
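Written stop rules can be small enough to read in one sitting. The sketch below encodes the common stop rules listed above as a single function. The `RunContext` fields, the 0.8 confidence threshold, and the approved category name are assumptions for the example, not recommendations.

```python
from dataclasses import dataclass, field


@dataclass
class RunContext:
    """Hypothetical snapshot of one workflow run at decision time."""
    missing_fields: list[str] = field(default_factory=list)
    records_conflict: bool = False
    topics: set[str] = field(default_factory=set)
    confidence: float = 1.0
    category: str = ""
    tool_call_failed: bool = False


SENSITIVE_TOPICS = {"money", "access", "legal", "compliance", "security"}
APPROVED_CATEGORIES = {"inbound_lead"}  # assumed scope for this example
MIN_CONFIDENCE = 0.8                    # assumed threshold; tune per workflow


def stop_reasons(ctx: RunContext) -> list[str]:
    """Return every stop rule the run trips; an empty list means proceed."""
    reasons = []
    if ctx.missing_fields:
        reasons.append("required data is missing: " + ", ".join(ctx.missing_fields))
    if ctx.records_conflict:
        reasons.append("records conflict across systems")
    hit = ctx.topics & SENSITIVE_TOPICS
    if hit:
        reasons.append("touches sensitive area: " + ", ".join(sorted(hit)))
    if ctx.confidence < MIN_CONFIDENCE:
        reasons.append("confidence is low")
    if ctx.category not in APPROVED_CATEGORIES:
        reasons.append("outside approved category")
    if ctx.tool_call_failed:
        reasons.append("tool call failed mid-action")
    return reasons


clean = RunContext(category="inbound_lead", confidence=0.93)
risky = RunContext(category="inbound_lead", confidence=0.55, topics={"money"})
print(stop_reasons(clean))  # []
print(stop_reasons(risky))  # ['touches sensitive area: money', 'confidence is low']
```

Returning every tripped rule, rather than stopping at the first one, gives the human who picks up the escalation the full picture in one pass.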

Make the logs readable to the business

Observability gets discussed like an engineering concern. In practice, it is an operating concern.

A business owner should be able to answer a simple set of questions about any run:

- What triggered it?

- What data did it inspect?

- What tools did it call?

- What decision did it make?

- What changed in a system?

- What got escalated?

- Who approved the final step?

If you cannot answer those questions, you do not really know how the workflow behaved. You only know that it produced an output.

That is not enough when the AI is touching queues, records, customer communication, or internal approvals.

NIST's current work on agent identity and authorization matters here because it reflects a broader shift. Enterprises are no longer treating AI as just a response engine. They are treating it as something that may act on behalf of users, across systems, with real consequences. That makes identity, permissions, and traceability part of the product, not side documentation.

You do not need a giant observability stack on day one. A readable run timeline is enough to start. Capture the input, the tools used, the proposed action, the escalation reason, the approval step, and the outcome. Review real runs every week.

That review loop is where the useful roadmap appears. You see where the data is weak, where the stop rules are too loose, where humans keep correcting the same output, and where the workflow itself is still poorly scoped.
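A readable run timeline does not require special tooling. As a rough sketch, the record below captures the six items named above (input, tools used, proposed action, escalation reason, approval step, outcome) using only the Python standard library. The field names and the sample values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class RunRecord:
    """One workflow run, stored as a flat record (field names are assumed)."""
    run_id: str
    trigger: str                      # the input that started the run
    tools_called: list[str]
    proposed_action: str
    escalation_reason: Optional[str]  # None if nothing was escalated
    approved_by: Optional[str]        # None if no approval was recorded
    outcome: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialize to one JSON line, suitable for an append-only log file."""
        return json.dumps(asdict(self))


def render_timeline(r: RunRecord) -> str:
    """Render a run as plain lines a business owner can scan."""
    steps = [
        f"Triggered by: {r.trigger}",
        f"Tools called: {', '.join(r.tools_called) or 'none'}",
        f"Proposed action: {r.proposed_action}",
        f"Escalated: {r.escalation_reason or 'no'}",
        f"Approved by: {r.approved_by or 'no approval recorded'}",
        f"Outcome: {r.outcome}",
    ]
    return "\n".join(steps)


record = RunRecord(
    run_id="run-001",
    trigger="new inbound form submission",
    tools_called=["crm.read_record", "crm.create_task"],
    proposed_action="create follow-up task for sales",
    escalation_reason=None,
    approved_by=None,
    outcome="task created",
)
print(render_timeline(record))
```

Keeping the stored record and the rendered view separate means the weekly review can read the plain-text version while engineering keeps the structured one.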

Evaluate the workflow, not just the answer

One of the easiest mistakes in AI rollout is grading the system like a demo.

The answer looked good. The draft sounded plausible. The classification was mostly right.

Fine. Did the workflow actually work?

For a production evaluation, I would want to know:

- Did it use the right source data?

- Did it avoid restricted actions?

- Did it escalate at the right time?

- Did the human reviewer have enough context to act quickly?

- Did the workflow reduce cycle time without creating more cleanup work later?

That is a better test than asking whether the model sounded smart.

OpenAI's April 8 enterprise note was useful for this reason too. The example was not "ask the AI anything." It was an agent that researches inbound prospects, scores them against a rubric, drafts outreach, and updates the CRM. That is a business workflow. Once you frame the work that way, evaluation gets more concrete. You can score the whole path, not just the sentence quality.
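Scoring the whole path can start as a simple tally. The sketch below assumes a reviewer answers the five questions above as yes or no for each sampled run, then reports the pass rate per check. The `RunReview` field names and the sample data are made up for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class RunReview:
    """A reviewer's yes/no answers for one run (hypothetical field names)."""
    used_right_sources: bool
    avoided_restricted_actions: bool
    escalated_at_right_time: bool
    reviewer_had_context: bool
    cut_time_without_cleanup: bool


def pass_rates(reviews: list[RunReview]) -> dict[str, float]:
    """Share of reviewed runs that passed each check, keyed by check name."""
    if not reviews:
        return {}
    return {
        f.name: sum(getattr(r, f.name) for r in reviews) / len(reviews)
        for f in fields(RunReview)
    }


reviews = [
    RunReview(True, True, True, True, True),
    RunReview(True, True, False, True, False),
    RunReview(True, True, True, False, True),
    RunReview(True, True, True, True, True),
]
for check, rate in pass_rates(reviews).items():
    print(f"{check}: {rate:.0%}")
```

With this sample data, the three checks that each failed once come out at 75%, which points the next review at escalation timing, reviewer context, and cleanup rather than at answer quality.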

The one-page operating model

For most early-stage teams, this does not need to become a giant governance program.

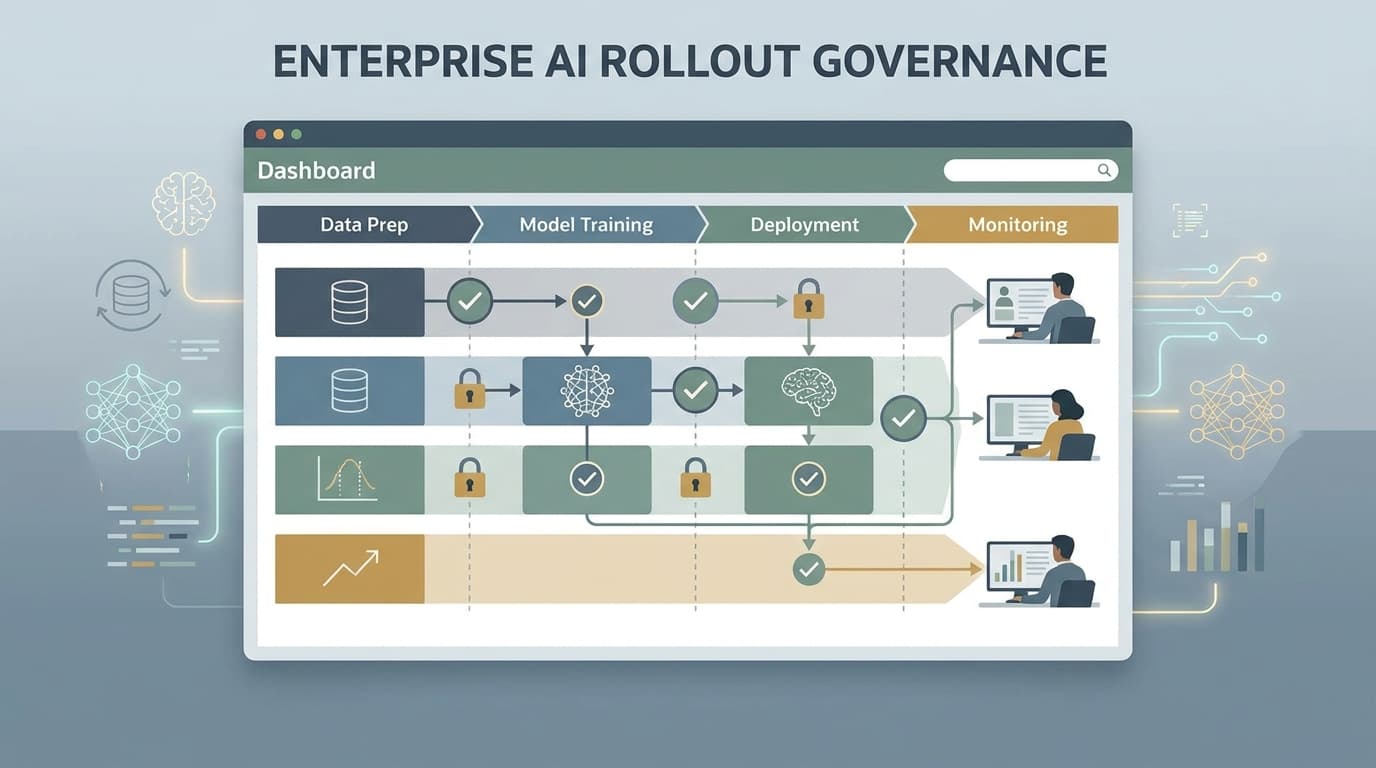

A one-page operating model is usually enough for the first real workflow. It should answer:

- What workflow is being changed?

- Who owns the business outcome?

- What systems can the AI read?

- What systems can it write to?

- Which actions are allowed without approval?

- Which actions always require review?

- What are the stop rules?

- What gets logged for every run?

- How is the workflow evaluated?

- Who can pause it or roll it back?

If the team cannot fill that out, I would not call the workflow production-ready.
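If it helps to keep the page next to the code, the same ten questions fit in one small structure. This is only a sketch: the `OperatingModel` field names are my shorthand for the questions above, and the `unanswered` helper is a hypothetical check, not a standard.

```python
from dataclasses import dataclass, field, fields


@dataclass
class OperatingModel:
    """The one-page operating model as data (field names are assumed)."""
    workflow: str = ""
    owner: str = ""
    read_systems: list[str] = field(default_factory=list)
    write_systems: list[str] = field(default_factory=list)
    allowed_without_approval: list[str] = field(default_factory=list)
    always_require_review: list[str] = field(default_factory=list)
    stop_rules: list[str] = field(default_factory=list)
    logged_per_run: list[str] = field(default_factory=list)
    evaluation: str = ""
    pause_owner: str = ""


def unanswered(model: OperatingModel) -> list[str]:
    """Names of questions still blank.

    An empty list counts as unanswered in this sketch, so a deliberate
    "nothing" should be written out, for example write_systems=["none"].
    """
    return [f.name for f in fields(model) if not getattr(model, f.name)]


draft = OperatingModel(
    workflow="inbound lead qualification",
    owner="Head of Sales Ops",
    read_systems=["crm"],
)
print(len(unanswered(draft)))  # 7
```

A check like this could run before a workflow is enabled, so a blank owner or empty stop rules block the launch instead of surfacing later.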

If the team can fill it out, engineering gets easier. Prompts get tighter. Permissions get clearer. Test cases stop being abstract. Reviews move faster because everyone knows where the boundaries are.

That is the real point.

AI rollout is starting to look less like a lab exercise and more like operating design. The teams that understand that are the ones with a better chance of shipping something the business can actually trust.

If you are trying to move from pilot to production and need help defining ownership, approvals, permissions, and rollout boundaries, book a discovery call.

Sources

- OpenAI, "The next phase of enterprise AI" (April 8, 2026): https://openai.com/index/next-phase-of-enterprise-ai/

- Anthropic, "Deploying Claude Cowork across the Enterprise, with PayPal" (April 16, 2026): https://www.anthropic.com/webinars/deploying-cowork-across-the-enterprise-with-paypal

- AWS, "From hours to minutes: How Agentic AI gave marketers time back for what matters" (April 17, 2026): https://aws.amazon.com/blogs/machine-learning/from-hours-to-minutes-how-agentic-ai-gave-marketers-time-back-for-what-matters/

- NIST, "AI Agent Standards Initiative" (created February 17, 2026, updated April 20, 2026): https://www.nist.gov/caisi/ai-agent-standards-initiative