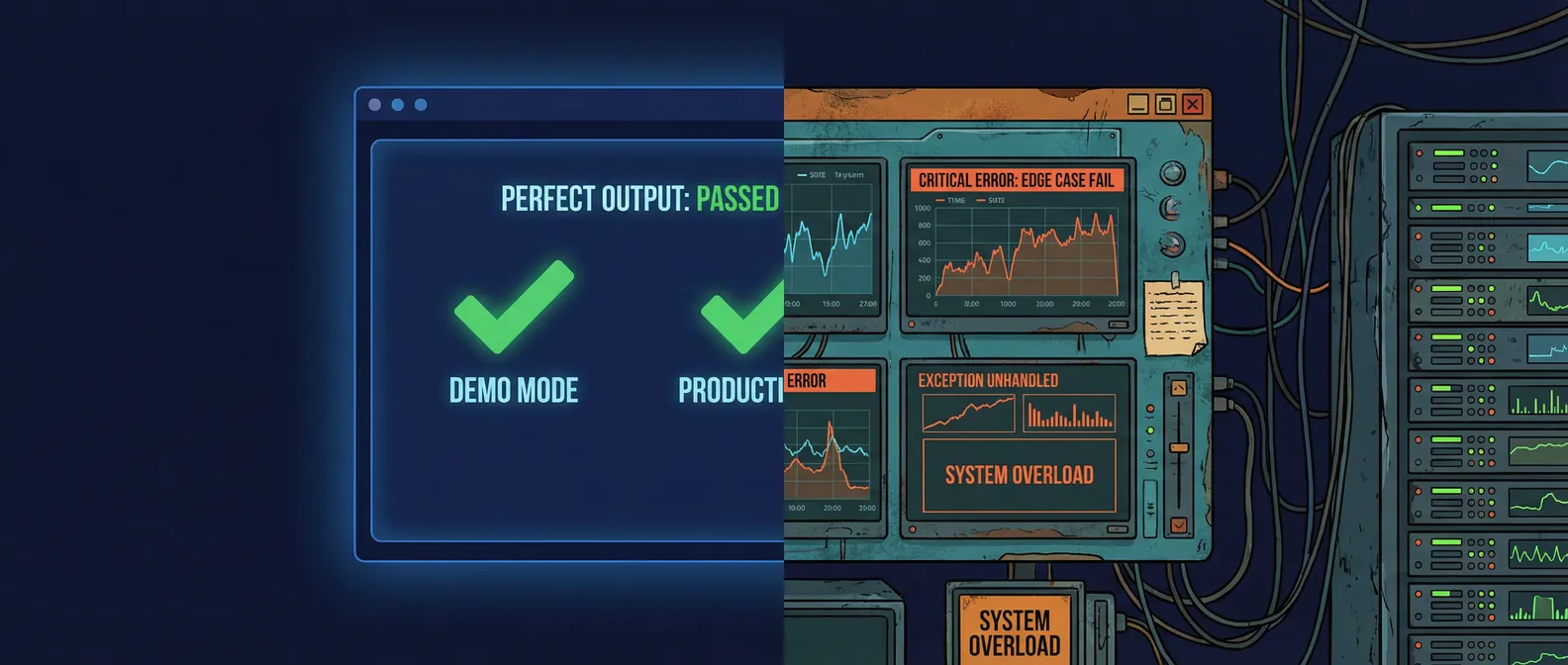

Every AI agent demo looks good. The inputs are clean, the task is well-defined, the model performs exactly as expected. The team is excited.

Then it goes live.

The failure modes that appear in production almost never appear in demos. Not because the demos are dishonest, but because demos are designed to show what a system can do, not what it can't handle. Production is the opposite problem.

Here is what separates an AI agent that ships from one that stays in a staging environment forever.

Why demos lie

A demo is a happy path. The input is well-formed, the context is exactly what the agent was designed for, and nobody is trying to use it in a way the developer didn't anticipate.

Production has none of those guarantees. Real users ask questions that are ambiguous, incomplete, contradictory, or outside the scope the agent was designed for. Real data has inconsistencies that test sets don't. Real systems have latency, rate limits, and transient failures that a local development environment doesn't simulate.

This isn't a criticism of anyone who builds demos. It's a structural property of the difference between a controlled test and an uncontrolled environment. The gap exists for every AI agent, and closing it is the actual work of shipping.

The five things a production agent needs that a demo doesn't

Graceful handling of inputs outside the expected range. A demo agent gets inputs that are in scope. A production agent gets every input a user can imagine sending. Some of those inputs will be malformed, off-topic, adversarial, or just weird. A production agent needs to detect when it's outside its competence and respond appropriately, not hallucinate a confident answer.

This is mostly a prompt engineering and evaluation problem. You need a test set that includes out-of-scope inputs, and you need a response strategy for each failure mode.

Retry logic and fallback behavior. Production systems call external APIs that go down, return errors, or time out. An agent that has no fallback when a tool call fails will stall or crash in ways that a demo, running against a stable local environment, never reveals.

Every tool call in a production agent needs explicit error handling: retry with backoff for transient failures, fallback behavior for persistent ones, and a clear failure state that tells the system what went wrong.

Observability. In a demo, you can watch the agent work. In production, you're not in the room. You need to know what the agent is doing, what decisions it's making, and where it's failing without watching every interaction.

This means structured logging of every tool call, every model response, and every decision branch. It means metrics on latency, error rates, and output quality. It means alerts when something drifts from expected behavior. An agent without observability is a black box, and black boxes are unmanageable.

Cost controls. Demo agents run a handful of interactions. Production agents run thousands. A prompt that costs $0.08 per call doesn't matter in a demo. At 10,000 calls per day, that is $800 daily. Token usage, model selection, caching strategies, and request batching all become real concerns once you're at scale.

The teams that don't think about this before launch are the ones getting surprised by their inference bills in month two.

A defined scope with enforcement. A demo agent is usually built around a specific, narrow task. Production agents get used for things the designer didn't intend. Users push the boundaries. The agent needs to know what it's supposed to do and be able to decline or redirect when asked to do something outside that boundary.

Scope enforcement is not about being restrictive. It's about being reliable. An agent that confidently attempts tasks it wasn't designed for produces unpredictable outputs. An agent with clear scope produces consistent ones.

The production checklist before you ship

Before an AI agent is ready for production, it should pass a basic set of gates:

Out-of-scope testing. Has the agent been tested with inputs that are outside its intended scope? Does it respond gracefully, or does it produce confident nonsense?

Failure injection. What happens when a tool call fails? When the API times out? When the model returns an error? Has each failure mode been tested explicitly?

Observability setup. Is every decision the agent makes logged? Can you reconstruct exactly what happened for any given interaction? Do you have alerts for anomalies?

Cost modeling. What is the expected cost per interaction at your anticipated volume? Is that acceptable? What's the plan if usage spikes?

Scope enforcement. Can you demonstrate that the agent declines out-of-scope requests correctly? Is the scope definition part of the system prompt and part of the eval suite?

If any of these don't have a clear answer, the agent is not production-ready. It's a prototype that will behave like a prototype once real users get to it.

What this means for how you build

The implication is that production readiness is not something you add at the end. It's something you design for from the start.

The teams that ship reliable AI agents treat production requirements as first-class design constraints. The failure modes are part of the architecture before the first line of code. The observability is built in, not bolted on. The scope is defined before the prompt is written.

That takes more time upfront. It saves multiples of that time in production debugging.

If you want a quick self-check before a real review, run the vibe-coded app production readiness checklist + fix prompts. It covers the same production controls in a faster, builder-friendly format.

If you're building an AI agent and want to make sure the architecture is production-ready before you commit to a full build, book a discovery call. We design for production from day one, and we'll tell you what we'd need to see before recommending a build.