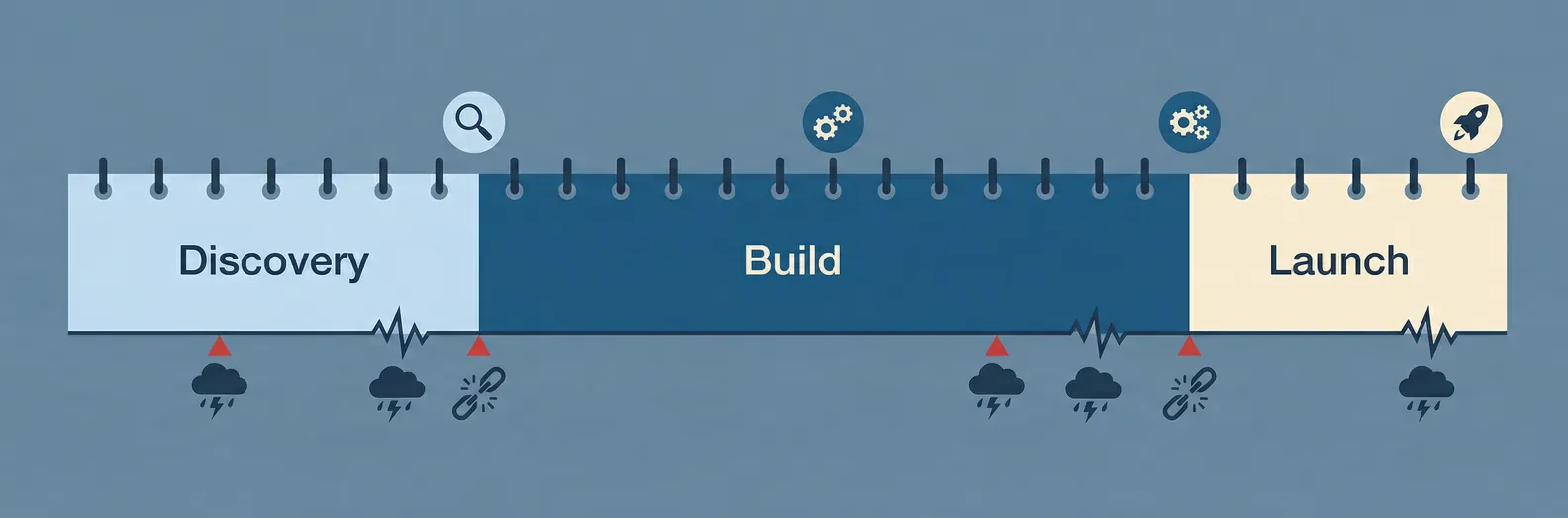

One of the most common questions we hear before a project starts: "How long is this going to take?" The answer depends on what you're building, but the first 90 days follow a pattern that's consistent enough to be useful as a planning guide.

Here's how it usually goes.

Days 1-14: Discovery

Before any code is written, you need a shared understanding of the problem. This phase is about asking questions that feel obvious but rarely get answered clearly before a project kicks off.

What exactly does the current workflow look like? Where does data come from, and where does it go? What does success look like in six months, and how would you measure it? Who else in the organization touches this process?

At the end of two weeks, the output is a project brief that describes the problem, the proposed solution, the data requirements, and the metrics that will define success. Nothing technical yet. Just a document that everyone involved can read and agree on.

Most clients are surprised by how much they learn in this phase. The scope you thought you had at day one is almost always different from the scope you have at day fourteen, and that's fine. The goal is to find those differences before you've built anything.

Days 15-30: Data and architecture

Now you know what you're building. The next two weeks are about figuring out if you have what you need to build it.

On the data side, that means auditing what exists, identifying gaps, getting access to source systems, and running quality checks. Data problems found here are manageable. Data problems found in week eight are expensive.
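A first-pass quality check does not need special tooling. The sketch below is one way to count missing values and duplicate keys in a batch of records. The function name `audit_records`, the field names, and the sample rows are all made up for illustration; a real audit would run against your own source systems and cover more than these two checks.

```python
from collections import Counter

def audit_records(records, required_fields, key_field):
    """Summarize missing values and duplicate keys in a list of dict records."""
    missing = Counter()
    for row in records:
        for field in required_fields:
            value = row.get(field)
            # Treat None and blank strings as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1

    # Any key that appears more than once is flagged as a duplicate.
    key_counts = Counter(row.get(key_field) for row in records)
    duplicates = sorted(k for k, n in key_counts.items() if k is not None and n > 1)

    return {
        "rows": len(records),
        "missing": {f: missing[f] for f in required_fields},
        "duplicate_keys": duplicates,
    }

# Hypothetical sample data standing in for an export from a source system.
sample = [
    {"id": 1, "email": "a@example.com", "amount": 10.0},
    {"id": 2, "email": "", "amount": 5.5},
    {"id": 2, "email": "c@example.com", "amount": None},
]

report = audit_records(sample, required_fields=["email", "amount"], key_field="id")
print(report)
```

A report like this is mostly useful as a conversation starter: it turns "the data is probably fine" into specific counts that someone has to explain.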

On the architecture side, this means making the key technical decisions: which models, which infrastructure, what the integration points look like, and how failures will be handled. A lightweight technical design document comes out of this phase. It is not a 40-page spec, but it is enough to guide development and flag risks early.

You'll also do your first round of model testing here: feeding real data into candidate approaches and seeing how they perform before committing to a full build. This is where you find out whether your problem is straightforward or whether it's going to take more tuning than expected.
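In practice, this first round of testing can be as simple as scoring each candidate against the same small set of labeled examples. The sketch below assumes a toy classification task; the two candidate functions, the labels, and the example messages are placeholders, and accuracy is only one of several metrics you might choose.

```python
def accuracy(predict, examples):
    """Fraction of (text, label) examples the candidate gets right."""
    correct = sum(1 for text, label in examples if predict(text) == label)
    return correct / len(examples)

# Two hypothetical candidates: a keyword rule and a trivial baseline.
def keyword_baseline(text):
    return "urgent" if "asap" in text.lower() else "normal"

def always_normal(text):
    return "normal"

# Stand-in for a sample of real, labeled data from the client's workflow.
labeled = [
    ("Need this fixed ASAP", "urgent"),
    ("Monthly report attached", "normal"),
    ("Server down, respond asap", "urgent"),
    ("Lunch on Friday?", "normal"),
]

candidates = {"keyword_baseline": keyword_baseline, "always_normal": always_normal}
scores = {name: accuracy(fn, labeled) for name, fn in candidates.items()}

for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

Including a deliberately trivial baseline is a useful habit: if a more complex candidate barely beats it, that is a sign the problem needs more tuning or better data.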

Days 31-60: Build and iterate

This is the actual development phase, and it's shorter than most people expect, because the first two phases did the hard work of removing ambiguity.

The first two weeks of this phase produce something functional that you can test with real inputs. Not production-ready. Not polished. But real enough to validate the core logic and surface edge cases that weren't visible on paper.

The second two weeks are iteration based on what you find: adjusting the model, refining the prompts or the fine-tuning, handling the edge cases, and getting the system to a state where it performs reliably on the inputs you actually see in your business.

The end of day 60 should give you something that works in a controlled environment, with a clear list of what remains before it's ready for production.

Days 61-90: Testing and deployment

The final phase is where you move from "it works" to "it works reliably, and we're confident."

This means staging environment testing, load and performance checks if volume matters, integration testing with the systems it connects to, and user acceptance testing with the people who will actually use it. It also means writing the runbooks: what to do when something breaks, who to call, how to restart a failed pipeline.

Deployment itself is usually not a single event. Staged rollouts, where the AI handles a subset of traffic first, are standard practice for anything business-critical. You monitor closely for the first week, compare the AI's outputs to the expected baseline, and expand gradually once you're confident.
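One common way to send a subset of traffic to the new system is to hash a stable identifier into a bucket from 0 to 99 and compare it to the rollout percentage. The sketch below illustrates that idea only; the function names, the `"ai"` and `"baseline"` labels, and the use of a request ID as the key are assumptions, not a description of any particular deployment stack.

```python
import hashlib

def in_rollout(request_id: str, percent: int) -> bool:
    """Return True if this ID falls inside the current rollout percentage."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < percent

def route(request_id: str, percent: int) -> str:
    """Pick which path handles the request (labels are hypothetical)."""
    return "ai" if in_rollout(request_id, percent) else "baseline"

# Simulate raising the rollout from 10% to 50% over the same set of IDs.
ids = [f"req-{i}" for i in range(1000)]
for percent in (10, 50):
    share = sum(route(r, percent) == "ai" for r in ids) / len(ids)
    print(f"{percent}% target -> {share:.1%} of sample routed to the AI path")
```

Because the bucket depends only on the ID, the same request lands on the same path every time, and anything routed to the AI at 10% stays there when you expand to 50%. That makes comparisons against the baseline easier to interpret.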

By day 90, the system is live. Not done, because AI systems need ongoing monitoring and periodic retraining, but live and running.

What can make this longer

Three things extend this timeline reliably:

Data access problems. If getting data requires IT tickets, legal review, or a third-party vendor, two weeks can become six. Identifying these blockers in discovery and starting the access process early is the most important thing you can do to protect the schedule.

Unclear success criteria. If you can't define what "working" means, iteration never ends. Getting specific on metrics in the first two weeks is not a nice-to-have.

Scope changes. Adding new requirements during the build phase is the most common reason AI projects run long. The discovery phase exists to prevent this. If something comes up mid-build, it usually goes on a list for phase two rather than getting added to the current scope.
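On the success-criteria point, it can help to write the metrics down in a form that leaves no room for interpretation. The sketch below is one hypothetical way to do that; the `Criterion` class, the metric names, and the target values are invented for illustration and would come out of your own discovery phase.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        """Compare an observed value against the agreed target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target

def evaluate(criteria, observed):
    """Return a pass/fail result for each named criterion."""
    return {c.name: c.met(observed[c.name]) for c in criteria}

# Hypothetical targets agreed during discovery.
criteria = [
    Criterion("accuracy", target=0.90),
    Criterion("median_latency_seconds", target=2.0, higher_is_better=False),
]

# Hypothetical measurements from a test run.
observed = {"accuracy": 0.93, "median_latency_seconds": 2.4}

results = evaluate(criteria, observed)
print(results)
```

With targets expressed this way, "is it working?" becomes a question with a yes-or-no answer per metric, which is what lets iteration end.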

What 90 days doesn't include

A 90-day timeline gets you a working system in production. It doesn't get you a mature one. Mature AI systems have months of production data, multiple rounds of model improvement, and refined edge-case handling that you can only develop through real-world use.

Plan for ongoing work after launch. The companies that get the most value from AI don't treat it as a project with an end date. They treat the initial build as the starting point and invest in improving it over time.

If you want to map out what a 90-day timeline would look like for your specific use case, book a discovery call. We'll walk through your workflow and give you a clear picture of what each phase would look like in practice.